As generative AI applications grow, a new type of database has become essential: the vector database.

Traditional databases store structured data such as numbers, text fields, and records, and they match queries on exact values or keywords. AI applications built on Large Language Models (LLMs) usually need something different: they need to find information by meaning.

This is where vector databases come in.

They enable semantic search, AI memory, recommendation systems, and Retrieval-Augmented Generation (RAG). Without them, modern AI assistants, chatbots, and document intelligence platforms would struggle to provide accurate, context-aware responses.

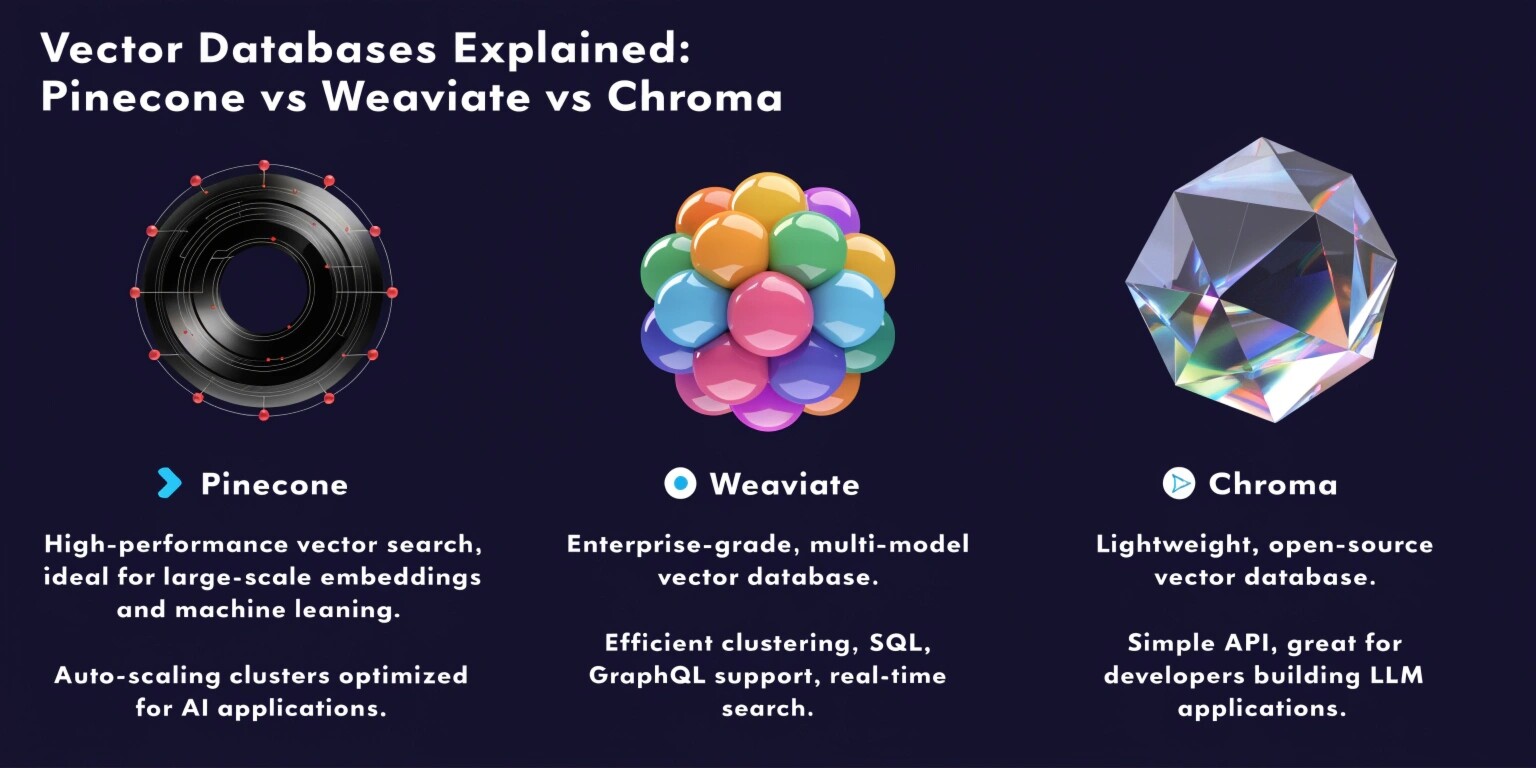

Let’s understand how vector databases work and compare three popular options: Pinecone, Weaviate, and Chroma.

What is a Vector Database?

When an embedding model processes text, images, or audio, it converts the input into a mathematical representation called an embedding: a list of numbers that represents meaning.

Example:

Two sentences like

“reset my password”

and

“I can’t log into my account”

may not share keywords, but they have similar meaning. Embeddings place them close together in a multi-dimensional space.

A vector database stores these embeddings and retrieves the closest matches using similarity search instead of exact matching.
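To make "closest match" concrete, here is a minimal sketch of similarity search using cosine similarity, one common similarity measure. The three-dimensional vectors are hand-made toy values chosen for illustration, not output from a real embedding model (real embeddings typically have hundreds or thousands of dimensions), and the `nearest` helper is a hypothetical name for this example.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy embeddings: made-up values, NOT produced by a real model.
embeddings = {
    "reset my password": [0.9, 0.1, 0.0],
    "I can't log into my account": [0.8, 0.3, 0.1],
    "best pizza in town": [0.0, 0.2, 0.9],
}

def nearest(query_vector, store, k=1):
    """Return the k stored texts whose vectors are most similar to query_vector."""
    ranked = sorted(
        store,
        key=lambda text: cosine_similarity(query_vector, store[text]),
        reverse=True,
    )
    return ranked[:k]

query = embeddings["reset my password"]
others = {t: v for t, v in embeddings.items() if t != "reset my password"}
print(nearest(query, others))
```

This brute-force version compares the query against every stored vector. Production vector databases generally avoid that by using approximate nearest-neighbor indexes, which trade a little accuracy for much faster lookups.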

Why This Matters

LLMs do not “know” your company’s internal documents. A vector database allows the model to fetch relevant context before answering — forming the foundation of RAG systems.

Pinecone

Pinecone is a fully managed, cloud-native vector database designed for production AI applications.

Key Features:

- Managed infrastructure

- High scalability

- Automatic indexing

- Low latency retrieval

- Metadata filtering

- Enterprise-ready reliability
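Metadata filtering means restricting a similarity search to records whose attributes match a condition, for example only documents owned by one team. The sketch below shows the idea in plain Python. It is not Pinecone's API or implementation; the record layout and the `filtered_search` function are assumptions made up for this illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Hypothetical records: an id, a toy vector, and free-form metadata.
records = [
    {"id": "doc-1", "vector": [0.9, 0.1], "metadata": {"team": "support", "lang": "en"}},
    {"id": "doc-2", "vector": [0.8, 0.2], "metadata": {"team": "sales", "lang": "en"}},
    {"id": "doc-3", "vector": [0.1, 0.9], "metadata": {"team": "support", "lang": "en"}},
]

def filtered_search(query_vector, records, where, top_k=2):
    """Keep only records whose metadata matches every key in `where`, then rank by similarity."""
    candidates = [
        r for r in records
        if all(r["metadata"].get(key) == value for key, value in where.items())
    ]
    candidates.sort(key=lambda r: cosine(query_vector, r["vector"]), reverse=True)
    return [r["id"] for r in candidates[:top_k]]

result = filtered_search([1.0, 0.0], records, where={"team": "support"})
print(result)
```

Note that `doc-2` is very close to the query vector but never appears in the result, because the filter removes it before ranking.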

Strengths:

Pinecone focuses on performance and ease of use. You don’t manage servers, shards, or scaling. It handles distributed indexing automatically.

Best Use Cases:

- Production chatbots

- SaaS AI platforms

- Enterprise knowledge assistants

- High-traffic applications

Limitation:

Pinecone is proprietary and paid, and it gives you less control over internal configuration than open-source systems do.

Weaviate

Weaviate is an open-source vector database with built-in AI modules and hybrid search capabilities.

Key Features:

- Open source

- GraphQL API

- Hybrid search (keyword + semantic)

- Built-in vectorization modules

- Schema-based data modeling

Strengths:

Weaviate is more than a vector store — it acts like a knowledge graph plus vector search. It supports filtering, relationships, and structured queries along with semantic retrieval.
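Hybrid search blends a keyword score with a semantic score so that exact term matches and similar meanings both count. The following is a simplified sketch of that blending, not Weaviate's actual implementation: the word-overlap score is a crude stand-in for a real keyword ranking function such as BM25, the vectors are toy values, and `hybrid_score` with its `alpha` weight is a hypothetical helper for this example.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def keyword_score(query, text):
    """Fraction of query words that appear in the text (simplified; not BM25)."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0

def hybrid_score(query, query_vector, doc, alpha=0.5):
    """Weighted blend: alpha=1.0 is purely semantic, alpha=0.0 is purely keyword."""
    semantic = cosine(query_vector, doc["vector"])
    lexical = keyword_score(query, doc["text"])
    return alpha * semantic + (1 - alpha) * lexical

# Toy documents with made-up 2-dimensional vectors.
docs = [
    {"text": "reset your password from the login page", "vector": [0.9, 0.1]},
    {"text": "troubleshooting account access problems", "vector": [0.8, 0.3]},
    {"text": "password rules for pizza loyalty cards", "vector": [0.1, 0.9]},
]

query, query_vector = "reset password", [1.0, 0.0]
ranked = sorted(docs, key=lambda d: hybrid_score(query, query_vector, d), reverse=True)
for doc in ranked:
    print(round(hybrid_score(query, query_vector, doc), 3), doc["text"])
```

With the default weight, the second document ranks above the third even though it shares no words with the query, because its vector is close. Setting `alpha=0.0` flips those two, since only word overlap counts.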

Best Use Cases:

- Enterprise document search

- Recommendation systems

- E-commerce personalization

- AI-powered search engines

Limitation:

Self-hosted deployments require infrastructure setup and DevOps management.

Chroma

Chroma is a lightweight, developer-friendly vector database optimized for local development and rapid prototyping.

Key Features:

- Simple Python integration

- Local persistence

- Fast setup

- Ideal for RAG experimentation

- Tight integration with LLM frameworks

Strengths:

Chroma is extremely easy to use. Many developers use it for proofs of concept, demos, and MVP AI apps.

Best Use Cases:

- Local AI assistants

- Prototypes

- Hackathons

- Small-scale RAG apps

Limitation:

Chroma is not ideal for high-scale production workloads.

Comparison

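| Feature | Pinecone | Weaviate | Chroma |
| --- | --- | --- | --- |
| Type | Managed cloud | Open-source | Lightweight local |
| Scalability | Very high | High | Low–Medium |
| Setup | Easiest | Moderate | Very easy |
| Cost | Paid | Free + hosting | Free |
| Best For | Production AI | Flexible enterprise | Prototyping |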

How Vector Databases Power RAG

In a Retrieval-Augmented Generation pipeline:

- Documents are converted into embeddings.

- The embeddings are stored in a vector database.

- A user asks a question.

- The system embeds the question and retrieves the most similar documents.

- The LLM generates a response grounded in the retrieved documents.

Without retrieval, an LLM can rely only on its training data, which makes hallucinated answers more likely when a question concerns private or recent information.
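The pipeline steps listed above can be sketched end to end in a few lines. This is a toy illustration under loud assumptions: `embed` uses simple word counts in place of a real embedding model (so it matches shared words rather than true meaning), the index is a plain Python list rather than a vector database, and `build_prompt` stops at assembling the prompt instead of calling an LLM. All function names are hypothetical.

```python
import math
from collections import Counter

def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model here."""
    cleaned = text.lower().replace("?", "").replace(".", "")
    return Counter(cleaned.split())

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

documents = [
    "Refunds are processed within five business days.",
    "Password resets are done from the account settings page.",
    "Our office is closed on public holidays.",
]

# Steps 1-2: embed each document and store it (a list stands in for the database).
index = [(doc, embed(doc)) for doc in documents]

def retrieve(question, index, k=1):
    """Steps 3-4: embed the question and return the k most similar documents."""
    question_vector = embed(question)
    ranked = sorted(index, key=lambda item: cosine(question_vector, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def build_prompt(question, context_docs):
    """Step 5 (input side): assemble the grounded prompt that would be sent to an LLM."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

question = "How are refunds processed?"
context = retrieve(question, index)
prompt = build_prompt(question, context)
print(prompt)
```

Swapping the toy pieces for real ones (an embedding model for `embed`, a vector database for `index`, and an LLM call on `prompt`) leaves the overall shape of the pipeline unchanged.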

Choosing the Right Database

Choose Pinecone if:

- You need production reliability

- You want minimal DevOps

- You have enterprise traffic

Choose Weaviate if:

- You need flexibility and filtering

- You want open-source control

- You’re building search platforms

Choose Chroma if:

- You are prototyping

- You want local testing

- You’re building MVPs

Future of Vector Databases

Vector databases are becoming a core part of the AI stack alongside relational databases. Many AI products, from coding assistants to recommendation engines, now depend on embedding retrieval.

They are the memory layer of modern AI systems.

Final Thoughts

Pinecone, Weaviate, and Chroma are not competitors in every situation — they serve different stages of the AI lifecycle.

Chroma helps you start.

Weaviate helps you customize.

Pinecone helps you scale.

The right choice depends on your product maturity, traffic, and operational capabilities. Understanding these trade-offs is essential when building real-world AI applications.