Scaling laws in deep learning have fundamentally changed how researchers design and train large language models (LLMs). Instead of relying on intuition or trial-and-error, scaling laws provide mathematical relationships that predict how model performance improves with increases in compute, data, and parameters.

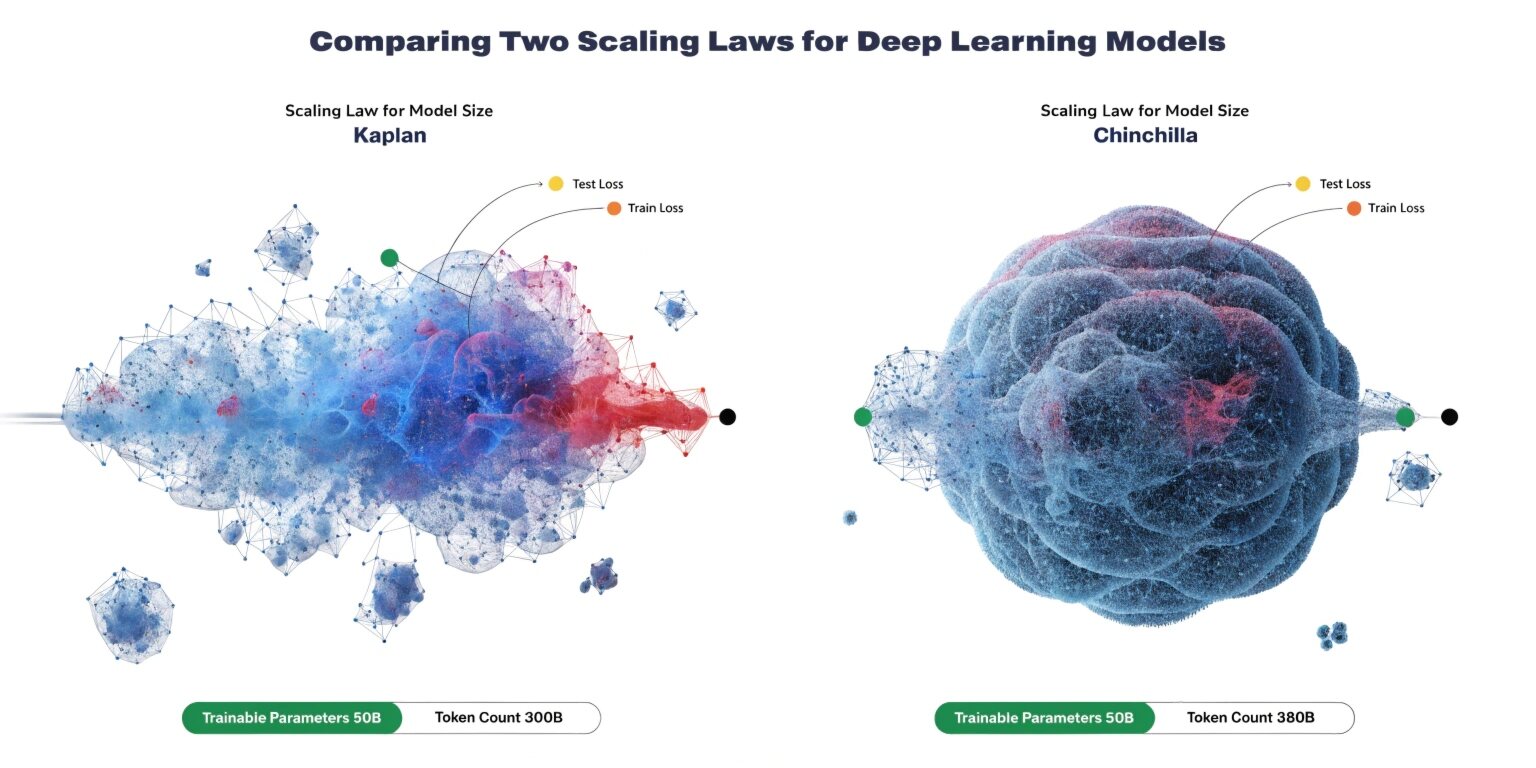

Two of the most influential approaches in this domain are the Kaplan scaling laws and the Chinchilla scaling laws. While both aim to optimize performance, they differ significantly in how they balance model size and training data.

Understanding Scaling Laws in Deep Learning

Scaling laws describe how a model’s loss decreases as a function of three primary factors:

- Model parameters (size)

- Training dataset size

- Compute budget

These relationships often follow power laws: each further increase in scale yields a smaller improvement, but the trend remains predictable.

Early research suggested that simply increasing model size would consistently improve performance. This idea formed the basis of Kaplan’s approach.

Kaplan Scaling Laws: Bigger Models, Better Performance

The Kaplan scaling laws, introduced by researchers at OpenAI in 2020, described loss as a power law in parameters, data, and compute, and concluded that under a fixed compute budget most of the gain comes from increasing the number of parameters.

$L \propto N^{-\alpha}$

Here, $L$ represents loss, $N$ is the number of parameters, and $\alpha$ is a scaling exponent. The implication is clear: larger models tend to perform better.
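To make the diminishing returns concrete, here is a minimal Python sketch of the power law above, written as $L(N) = (N_c / N)^{\alpha}$. The function name `power_law_loss` and the default constants `n_c` and `alpha` are illustrative assumptions, not values taken from this article; real values have to be fitted to training runs.

```python
def power_law_loss(n_params: float, n_c: float = 8.8e13, alpha: float = 0.076) -> float:
    """Predicted loss under L(N) = (n_c / N) ** alpha.

    n_c and alpha are placeholder constants chosen for illustration only.
    """
    if n_params <= 0:
        raise ValueError("n_params must be positive")
    return (n_c / n_params) ** alpha


if __name__ == "__main__":
    # Each row uses 10x more parameters than the previous one.
    for n in (1e8, 1e9, 1e10, 1e11):
        print(f"N = {n:.0e}  predicted loss = {power_law_loss(n):.3f}")
```

Under a pure power law, every 10x increase in parameters multiplies the loss by the same factor, $10^{-\alpha}$. With the placeholder exponent used here that factor is about 0.84, so each tenfold jump in size lowers the loss by roughly 16%.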

Key characteristics of Kaplan scaling:

- Focus on increasing model size

- Training data grows, but more slowly than model size

- Compute is heavily invested in larger architectures

This approach led to the development of massive models with billions (and later trillions) of parameters. However, it introduced inefficiencies—many models were undertrained relative to their size.

The Limitation of Kaplan’s Approach

While Kaplan scaling showed strong results, it assumed that increasing parameters was the most effective way to use compute. In practice, this led to:

- High training costs

- Underutilized model capacity

- Inefficient data usage

Researchers began questioning whether the same compute budget could be used more effectively.

Chinchilla Scaling Laws: Optimal Balance Wins

The Chinchilla scaling laws, proposed by DeepMind in 2022, challenged Kaplan’s conclusions. Instead of prioritizing model size, Chinchilla emphasized balancing model parameters and training data.

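$L \propto (N^{-\alpha} + D^{-\beta})$

Here, $D$ represents dataset size, measured in training tokens, and $\beta$ is its scaling exponent. The key insight is that both terms matter: once one of them is small, the other dominates the loss, so model size and data need to be scaled together.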
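One way to see why the two terms should be balanced is to minimize the loss under a fixed compute budget $C$. The sketch below assumes the additive form $L(N, D) = E + A N^{-\alpha} + B D^{-\beta}$ and the common approximation $C \approx 6ND$ for training FLOPs. The constants $E$, $A$, $B$ and the factor 6 are assumptions introduced for this derivation, not values stated in this article.

```latex
\begin{aligned}
L(N, D) &= E + A N^{-\alpha} + B D^{-\beta}, \qquad D = \frac{C}{6N} \\
\frac{\partial L}{\partial N} &= -\alpha A N^{-\alpha-1}
  + \beta B \left(\frac{C}{6}\right)^{-\beta} N^{\beta-1} = 0 \\
N_{\text{opt}} &= G \left(\frac{C}{6}\right)^{\frac{\beta}{\alpha+\beta}}, \qquad
D_{\text{opt}} = G^{-1} \left(\frac{C}{6}\right)^{\frac{\alpha}{\alpha+\beta}}, \qquad
G = \left(\frac{\alpha A}{\beta B}\right)^{\frac{1}{\alpha+\beta}}
\end{aligned}
```

When $\alpha$ and $\beta$ are close in value, both exponents are near 0.5, so parameters and tokens grow at about the same rate as compute increases.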

Chinchilla’s findings revealed that:

- Many large models were undertrained

- Increasing data can be more effective than increasing parameters

- As compute grows, model size and dataset size should be scaled in roughly equal proportion
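The balanced allocation described above can be sketched in a few lines of Python. This is a rough estimate under two assumptions that do not come from this article: training compute is approximated as $C \approx 6ND$ FLOPs, and the compute-optimal ratio is taken to be about 20 training tokens per parameter, a commonly quoted rule of thumb. The function name `compute_optimal_split` is hypothetical.

```python
import math

FLOPS_PER_PARAM_TOKEN = 6.0  # assumption: C ~ 6 * N * D training FLOPs
TOKENS_PER_PARAM = 20.0      # assumption: D ~ 20 * N rule of thumb


def compute_optimal_split(compute_flops: float) -> tuple[float, float]:
    """Return (n_params, n_tokens) that satisfy both assumptions above.

    Substituting D = 20 * N into C = 6 * N * D gives N = sqrt(C / 120).
    """
    if compute_flops <= 0:
        raise ValueError("compute_flops must be positive")
    n_params = math.sqrt(compute_flops / (FLOPS_PER_PARAM_TOKEN * TOKENS_PER_PARAM))
    n_tokens = TOKENS_PER_PARAM * n_params
    return n_params, n_tokens


if __name__ == "__main__":
    n, d = compute_optimal_split(5.76e23)
    print(f"params ~ {n:.2e}, tokens ~ {d:.2e}")
```

Because both quantities scale with the square root of compute under these assumptions, a 100x larger budget implies a model about 10x larger trained on about 10x more tokens, rather than a model 100x larger.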

Kaplan vs Chinchilla: Key Differences

1. Resource Allocation

- Kaplan: Prioritizes model parameters

- Chinchilla: Balances parameters and data

2. Compute Efficiency

- Kaplan: High compute spent on large models

- Chinchilla: The same compute spread across a smaller model and more training tokens

3. Training Strategy

- Kaplan: Fewer tokens per parameter

- Chinchilla: More tokens per parameter

4. Practical Impact

- Kaplan: Led to extremely large but inefficient models

- Chinchilla: Produces smaller, better-trained models that can match or outperform larger ones trained with the same compute
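The tokens-per-parameter difference in point 3 is easy to check with a small Python sketch. The parameter and token counts below are publicly reported approximate figures for three well-known models; they are not taken from this article and are included only to illustrate the ratio.

```python
# Approximate publicly reported (parameters, training tokens); illustrative only.
MODELS = {
    "GPT-3": (175e9, 300e9),
    "Gopher": (280e9, 300e9),
    "Chinchilla": (70e9, 1.4e12),
}


def tokens_per_parameter(n_params: float, n_tokens: float) -> float:
    """Ratio of training tokens to model parameters."""
    if n_params <= 0:
        raise ValueError("n_params must be positive")
    return n_tokens / n_params


if __name__ == "__main__":
    for name, (n_params, n_tokens) in MODELS.items():
        ratio = tokens_per_parameter(n_params, n_tokens)
        print(f"{name:<10} {ratio:5.1f} tokens per parameter")
```

With these figures, Chinchilla comes out at 20 tokens per parameter, while the two larger models stay below 2.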

Why Chinchilla Matters Today

Chinchilla scaling has reshaped how modern LLMs are built. Instead of blindly increasing model size, organizations now focus on compute-optimal training.

This has several advantages:

- Lower infrastructure costs

- Faster training cycles

- Better generalization

- Reduced environmental impact

For companies building AI products, this shift is critical. It allows them to achieve better performance without requiring massive computational resources.

Real-World Implications for AI Development

The transition from Kaplan to Chinchilla scaling affects multiple areas:

1. Model Design

Engineers now prioritize dataset quality and size alongside architecture design.

2. Training Pipelines

Data pipelines have become as important as model engineering.

3. Cost Optimization

Organizations can achieve similar or better results with smaller models, reducing cloud expenses.

4. Accessibility

Smaller, efficient models make advanced AI more accessible to startups and mid-sized companies.
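The cost argument in point 3 can be made concrete with a back-of-the-envelope Python sketch. It assumes training costs about $6ND$ FLOPs and a forward pass costs about $2N$ FLOPs per token; both are common approximations, not figures from this article. The two configurations compared are hypothetical examples chosen for illustration.

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training cost, assuming C ~ 6 * N * D."""
    return 6.0 * n_params * n_tokens


def inference_flops_per_token(n_params: float) -> float:
    """Approximate forward-pass cost per token, assuming ~ 2 * N."""
    return 2.0 * n_params


if __name__ == "__main__":
    # Hypothetical configurations: a large model on fewer tokens
    # versus a 4x smaller model on more tokens.
    large = (280e9, 300e9)
    small = (70e9, 1.4e12)

    print(f"large model training FLOPs: {training_flops(*large):.2e}")
    print(f"small model training FLOPs: {training_flops(*small):.2e}")

    ratio = inference_flops_per_token(large[0]) / inference_flops_per_token(small[0])
    print(f"per-token inference cost ratio (large / small): {ratio:.1f}")
```

Under these assumptions the two training runs cost a similar amount, but the smaller model is about 4x cheaper per token to serve, which is where most of the ongoing savings come from.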

Future of Scaling Laws

Scaling laws continue to evolve. Researchers are now exploring:

- Multimodal scaling (text, image, video)

- Sparse models and mixture-of-experts architectures

- Data quality vs quantity trade-offs

- Fine-tuning efficiency

The next generation of scaling laws will likely focus not just on size and data, but also on efficiency, adaptability, and sustainability.

Conclusion

The comparison between Kaplan and Chinchilla scaling laws highlights a critical shift in deep learning: bigger is no longer always better.

Kaplan’s work laid the foundation by demonstrating the power of scale, but Chinchilla refined that understanding by showing how to use resources efficiently.

For modern AI systems, the winning strategy is clear—balance model size with sufficient data and optimize compute usage. This approach not only improves performance but also ensures scalability, cost-effectiveness, and long-term sustainability in AI development.