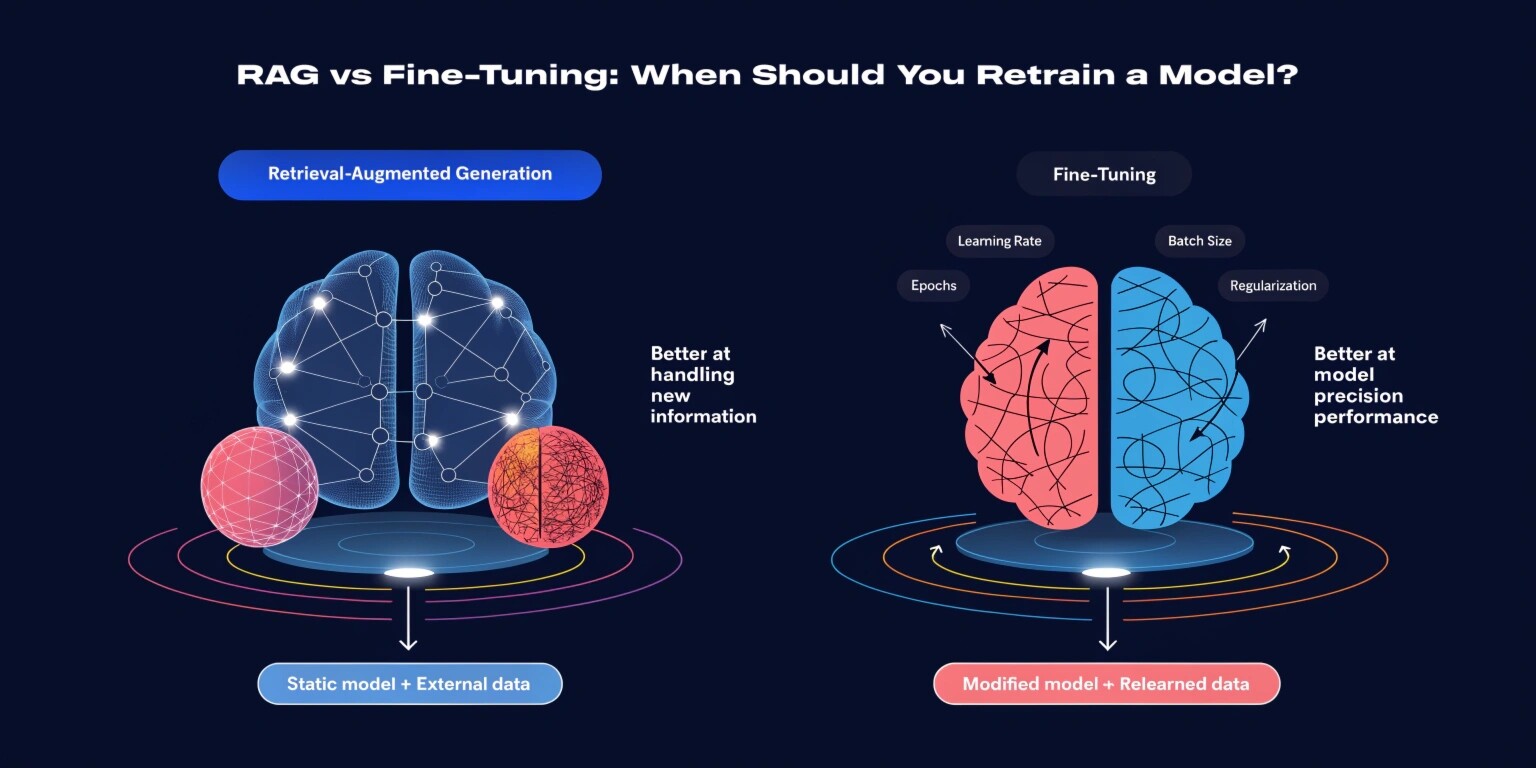

As large language models (LLMs) move from experimentation to production systems, one major architectural decision arises: should you use Retrieval-Augmented Generation (RAG), or should you fine-tune the model?

Both approaches enhance model performance—but they solve different problems. Choosing the wrong strategy can lead to unnecessary costs, scalability bottlenecks, or suboptimal performance.

Let’s break down the differences and understand when retraining is truly necessary.

What is RAG (Retrieval-Augmented Generation)?

Retrieval-Augmented Generation is an architecture where a language model retrieves relevant external information before generating a response. Instead of retraining the model, you connect it to a knowledge base using vector embeddings and similarity search.

How RAG Works:

- User submits a query.

- The system converts it into embeddings.

- A vector database retrieves relevant documents.

- The retrieved context is passed to the LLM.

- The model generates an answer using both the prompt and retrieved data.

Advantages of RAG:

- No model retraining required

- Lower cost compared to fine-tuning

- Easy knowledge updates (just update documents)

- Faster deployment cycles

- Reduced hallucination when grounded properly

Ideal Use Cases:

- Enterprise document search

- Internal knowledge assistants

- Customer support bots

- Policy or compliance-based answers

- Frequently updated data environments

RAG works exceptionally well when the model already understands language well but needs access to domain-specific information.

What is Fine-Tuning?

Fine-tuning involves retraining a base LLM on custom datasets to modify its behavior, tone, or task performance. Instead of adding external knowledge dynamically, you embed new patterns directly into the model weights.

Types of Fine-Tuning:

- Full fine-tuning

- Parameter-efficient fine-tuning (LoRA, adapters)

- Instruction tuning

- Supervised fine-tuning

Advantages:

- Behavior customization

- Improved domain-specific reasoning

- Consistent response style

- Better task specialization

- Lower latency (no retrieval step)

Ideal Use Cases:

- Custom tone or brand voice

- Highly structured outputs

- Domain-specific reasoning tasks

- Complex workflows (legal drafting, medical summaries)

- Proprietary classification systems

Fine-tuning is about changing how the model thinks, not just what it knows.

RAG vs Fine-Tuning: Core Differences

1. Knowledge Updates

- RAG: Update documents instantly.

- Fine-Tuning: Requires retraining cycle.

If your data changes frequently, RAG is superior.

2. Cost

- RAG: Lower upfront cost, infrastructure-based.

- Fine-Tuning: Expensive training and validation.

For startups or MVPs, RAG is often more practical.

3. Performance Consistency

- RAG: Performance depends on retrieval quality.

- Fine-Tuning: More predictable task execution.

For strict format or rule-based outputs, fine-tuning wins.

4. Scalability

- RAG: Scales with better indexing and vector DB.

- Fine-Tuning: Requires model version management.

RAG scales knowledge. Fine-tuning scales behavior.

5. Latency

- RAG: Slightly slower (retrieval step).

- Fine-Tuning: Faster response generation.

For real-time systems with strict latency, fine-tuning may be preferred.

When Should You Use RAG?

Choose RAG if:

- Your knowledge base updates frequently.

- You need factual grounding.

- You are building enterprise search tools.

- You want cost-effective deployment.

- Your base model already performs well.

Example:

An HR chatbot answering policy questions from updated company documents. Retraining every time HR updates policies would be inefficient—RAG is ideal.

When Should You Fine-Tune?

Choose fine-tuning if:

- You need consistent structured outputs.

- The base model fails in domain reasoning.

- You require tone customization.

- You want task specialization.

- Retrieval alone cannot improve reasoning.

Example:

A legal AI drafting contracts in a specific jurisdiction format. The model must internalize legal reasoning patterns—fine-tuning is more appropriate.

Hybrid Approach: The Best of Both Worlds

Modern production systems often combine both:

- Fine-tune the model for behavior and tone.

- Use RAG for dynamic knowledge injection.

This hybrid architecture balances:

- Knowledge freshness

- Behavioral consistency

- Scalability

- Cost control

Many enterprise AI systems follow this layered approach to optimize performance.

Key Decision Framework

Before choosing retraining, ask:

- Is my problem about knowledge or behavior?

- Does my data change frequently?

- Do I need strict output formatting?

- What is my budget?

- How important is latency?

- Can prompt engineering solve it first?

If prompt engineering and RAG solve your problem, avoid retraining. Fine-tuning should be a strategic decision—not the default.

Common Mistakes

- Fine-tuning before validating with RAG.

- Using RAG without optimizing retrieval quality.

- Ignoring evaluation metrics.

- Not testing hybrid models.

- Overfitting small datasets during fine-tuning.

Final Thoughts

RAG and fine-tuning are not competitors—they are complementary tools. RAG enhances knowledge access, while fine-tuning enhances reasoning patterns and behavior.

Retrain a model only when you need behavioral change or deeper specialization. If your challenge is information access, RAG is usually sufficient.

In production AI systems, architectural decisions define scalability, cost efficiency, and long-term maintainability. Choose wisely.