For the past two years, “Prompt Engineering” was one of the hottest skills in AI.

People believed that mastering clever instructions like:

“Act as a senior expert…”

“Think step-by-step…”

“You are the world’s best consultant…”

…was enough to build powerful AI products.

But something important happened.

Real-world AI systems started failing.

Companies discovered that writing smarter prompts does not create reliable software.

And this realization gave birth to a new approach:

Programmatic Prompting.

This does not mean prompt engineering is useless.

It means prompt engineering alone is no longer sufficient for production AI.

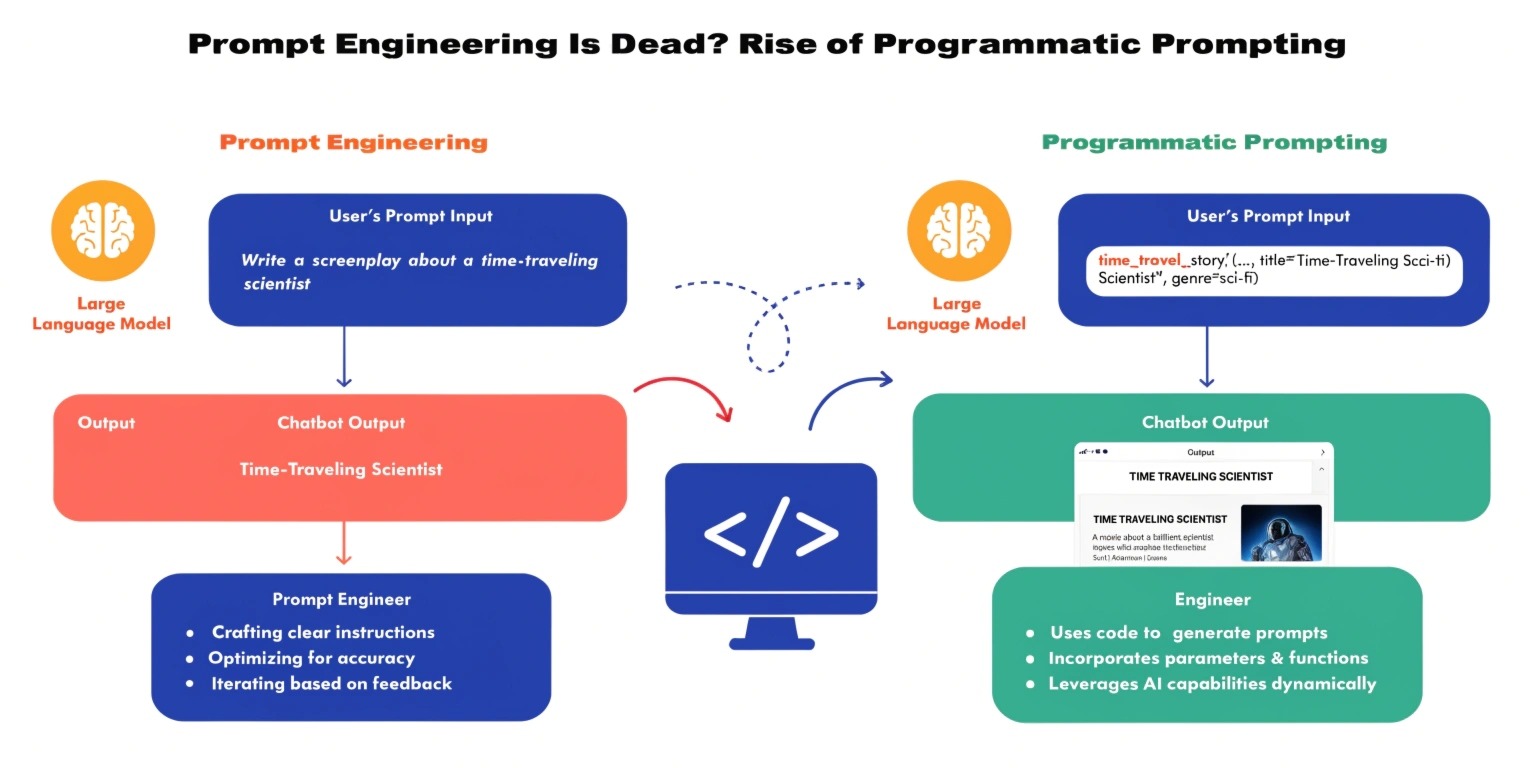

What Was Prompt Engineering?

Prompt engineering is the process of crafting natural language instructions to guide a large language model (LLM) toward desired outputs.

Examples include:

- role prompting

- few-shot examples

- chain-of-thought reasoning

- format instructions

It works well in demos and prototypes.

But production systems have different requirements:

- reliability

- repeatability

- accuracy

- security

- scalability

A manually written prompt cannot guarantee any of these on its own.

Why?

Because LLMs are probabilistic systems, not deterministic programs.

The Core Problem

Imagine building:

- a legal document analyzer

- a medical support assistant

- a fintech reporting tool

You cannot rely on a single text prompt.

Even with a carefully written prompt, the model will sometimes:

- hallucinate

- ignore instructions

- produce inconsistent formatting

- break JSON output

- invent data

This is where many early AI startups ran into trouble.

They tried to build software using only prompts.

But AI applications are not prompts.

They are systems.

What is Programmatic Prompting?

Programmatic prompting means:

Treating prompts as part of a software architecture rather than as standalone instructions.

Instead of one large prompt, you build a pipeline of controlled steps.

You do not ask the model to do everything at once.

You orchestrate it.

A modern LLM application typically contains:

- Input validation

- Retrieval (RAG)

- Structured prompt template

- Tool calling

- Output validation

- Post-processing

The model becomes a component inside software — not the software itself.

Example: Traditional vs Programmatic

Old Method (Prompt Engineering)

“Analyze this contract and summarize risks.”

Problems:

- misses clauses

- inconsistent output

- hallucinated legal risks

New Method (Programmatic Prompting)

Pipeline:

- Extract contract text

- Chunk the document

- Retrieve relevant clauses

- Ask LLM specific questions

- Force JSON response

- Validate schema

- Aggregate results

Now the system is far more reliable, because every step can be checked on its own.

The difference is architecture, not wording.
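As a rough illustration of the pipeline above, here is a minimal sketch of the chunk, ask, validate, and aggregate steps. Everything in it is an assumption made for the example: `fake_llm` is a stand-in for a real model call, the `has_risk` field is a made-up response shape, and the retrieval step is skipped so that every chunk is examined.

```python
import json

def chunk(text: str, max_words: int = 40) -> list[str]:
    """Split text into pieces of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: flags text that mentions a penalty."""
    return json.dumps({"has_risk": "penalty" in prompt.lower()})

def analyze_contract(text: str, model=fake_llm, max_words: int = 40) -> dict:
    """Ask one narrow question per chunk, validate each reply, aggregate the results."""
    report = {"risks": [], "unparsed": []}
    for index, piece in enumerate(chunk(text, max_words)):
        raw = model(f"Does this text contain a financial risk? Reply as JSON.\n{piece}")
        try:
            answer = json.loads(raw)
        except json.JSONDecodeError:
            report["unparsed"].append(index)  # keep track instead of silently dropping
            continue
        if isinstance(answer, dict) and answer.get("has_risk") is True:
            report["risks"].append(index)
    return report

contract = (
    "Clause 1. The supplier delivers goods monthly. "
    "Clause 2. Late delivery incurs a penalty of 5 percent."
)
report = analyze_contract(contract, max_words=8)
```

The point of the sketch is that a failure in one chunk is recorded and isolated rather than corrupting the whole answer.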

Key Techniques in Programmatic Prompting

1. Prompt Templates

Prompts are parameterized like code: instead of rewriting a prompt for every request, you fill in variables such as:

- {company_name}

- {document_type}

- {required_format}

This removes accidental variation in how the prompt is worded, although the model's output can still vary.
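A minimal sketch of a parameterized prompt, using Python's built-in `str.format`. The template wording and the `render_prompt` helper are assumptions for illustration; only the three variable names come from the list above.

```python
# Hypothetical template; the placeholders mirror the variables listed above.
SUMMARY_TEMPLATE = (
    "You are reviewing a {document_type} for {company_name}.\n"
    "Return your answer as {required_format}."
)

def render_prompt(company_name: str, document_type: str, required_format: str) -> str:
    """Fill every placeholder; a missing argument fails loudly at call time."""
    return SUMMARY_TEMPLATE.format(
        company_name=company_name,
        document_type=document_type,
        required_format=required_format,
    )

prompt = render_prompt("Acme Corp", "supplier contract", "a JSON object")
```

Because the template lives in one place, it can be versioned, reviewed, and tested like any other piece of code.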

2. Retrieval Augmented Generation (RAG)

Rather than asking the model to “remember,” you provide context from a database.

The model relies far less on guessing.

It answers using real data.

This can substantially reduce hallucination, though it does not eliminate it.
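The retrieval idea can be sketched in a few lines. This example ranks documents by simple word overlap, which is a toy stand-in for the embedding-based search a real system would more likely use. The function names, sample documents, and prompt wording are all assumptions for illustration.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return the top_k documents sharing the most words with the question."""
    q = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:top_k]

def build_grounded_prompt(question: str, documents: list[str]) -> str:
    """Put the retrieved context into the prompt ahead of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )

DOCS = [
    "The termination clause requires 60 days written notice.",
    "Payment is due within 30 days of invoice.",
    "Office hours are 9 to 5.",
]
question = "What notice does the termination clause require?"
grounded = build_grounded_prompt(question, DOCS)
```

The instruction to admit ignorance matters as much as the context itself, since retrieval can return nothing useful.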

3. Tool Calling

Modern LLMs can call:

- APIs

- databases

- calculators

- search engines

Example:

Instead of asking:

“Calculate revenue growth”

The model calls a function that computes it accurately.

The LLM reasons, but software calculates.
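Here is one way the split between reasoning and calculating might look. The sketch assumes the model emits a tool request as a JSON string with `name` and `arguments` keys. That shape is made up for this example and is not any specific vendor's format; the hard-coded `model_output` stands in for a real model response.

```python
import json

def revenue_growth(previous: float, current: float) -> float:
    """Percentage growth from previous to current revenue."""
    if previous == 0:
        raise ValueError("previous revenue must be non-zero")
    return (current - previous) / previous * 100

# Registry of functions the model is allowed to request.
TOOLS = {"revenue_growth": revenue_growth}

def dispatch(tool_call_json: str) -> float:
    """Parse a tool request and run the matching function from the registry."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])

# Hypothetical model output requesting a calculation instead of doing the math itself.
model_output = '{"name": "revenue_growth", "arguments": {"previous": 200.0, "current": 250.0}}'
growth = dispatch(model_output)
```

The registry doubles as an allow-list: the model can only trigger functions the application has explicitly exposed.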

4. Structured Outputs

You force the model to return:

- JSON

- schema-based outputs

- validated formats

Then your application checks the output before showing it to users.

Broken responses are rejected or retried instead of reaching the user.
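A minimal sketch of that check, using only the standard library. The field names, the allowed severity values, and the `fake_model` stub (which fails once and then succeeds) are assumptions for illustration. A production system might use a schema library instead of hand-written checks.

```python
import json

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"risk": str, "severity": str, "clause": str}
ALLOWED_SEVERITY = {"low", "medium", "high"}

def validate(raw: str) -> dict:
    """Parse model output and check it against the schema; raise ValueError otherwise."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, kind in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), kind):
            raise ValueError(f"missing or wrongly typed field: {field}")
    if data["severity"] not in ALLOWED_SEVERITY:
        raise ValueError("severity must be low, medium, or high")
    return data

def call_with_retries(model, prompt: str, max_attempts: int = 3) -> dict:
    """Call the model until its output validates or the attempts run out."""
    last_error = None
    for _ in range(max_attempts):
        try:
            return validate(model(prompt))
        except ValueError as exc:
            last_error = exc
    raise RuntimeError(f"no valid output after {max_attempts} attempts: {last_error}")

# Stub model: the first reply is broken, the second is well-formed.
_replies = iter([
    "Sure! Here is the JSON: {",
    '{"risk": "Auto-renewal", "severity": "high", "clause": "12.3"}',
])

def fake_model(prompt: str) -> str:
    return next(_replies)

result = call_with_retries(fake_model, "List one contract risk as JSON.")
```

If every attempt fails, the caller gets an explicit error to handle, which is easier to reason about than malformed text leaking into the interface.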

5. Agents & Workflow Orchestration

Instead of relying on one model call to do everything, you create specialized agents:

- planner

- researcher

- executor

- validator

Each does one job.

This mirrors human organizations and can increase reliability, because each agent's output can be checked separately.
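The division of labor can be sketched as a chain of small functions that pass a shared state along. In this example each "agent" is a plain deterministic stub. In a real system each stage would likely wrap its own model call, and all names and sample data here are assumptions for illustration.

```python
from typing import Callable

State = dict  # shared state handed from one stage to the next

def planner(state: State) -> State:
    # A real planner might ask a model to break the task into steps.
    state["plan"] = [f"research: {state['task']}", "write answer"]
    return state

def researcher(state: State) -> State:
    # Hypothetical lookup result; a real stage might query a search index.
    state["notes"] = ["Clause 7 requires 60 days notice."]
    return state

def executor(state: State) -> State:
    # Turn the collected notes into a draft answer.
    state["draft"] = " ".join(state["notes"])
    return state

def validator(state: State) -> State:
    # Approve only a non-empty draft that is backed by at least one note.
    state["approved"] = bool(state["draft"]) and len(state["notes"]) > 0
    return state

STAGES: list[tuple[str, Callable[[State], State]]] = [
    ("planner", planner),
    ("researcher", researcher),
    ("executor", executor),
    ("validator", validator),
]

def run_workflow(task: str) -> State:
    """Run each stage in order and record which stages have completed."""
    state: State = {"task": task, "trace": []}
    for name, stage in STAGES:
        state = stage(state)
        state["trace"].append(name)
    return state

final = run_workflow("What is the notice period?")
```

Keeping the stages as separate functions means each one can be tested, replaced, or logged independently.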

Why Prompt Engineering Alone Is Fading

Prompt engineering is similar to writing a clever command line.

Programmatic prompting is similar to building an operating system.

Companies learned an important lesson:

You cannot scale a business on prompts.

You scale it on architecture.

Hiring only a “prompt engineer” is now insufficient.

Companies need:

- AI engineers

- LLM application developers

- AI system designers

The skill is shifting from linguistics → software engineering.

Where Prompt Engineering Still Matters

It is still useful for:

- prototyping ideas

- testing model capability

- improving responses inside a pipeline

But it is now a component, not the solution.

The Future of AI Development

The next generation of AI products will not be chatbots.

They will be:

- autonomous workflows

- decision systems

- AI copilots

- enterprise automation

And all of them depend on programmatic prompting.

The winning teams will not be those writing the smartest prompts —

they will be those designing the best systems.

Final Thoughts

Prompt engineering was the first phase of the LLM era.

Programmatic prompting is the second.

We are moving from:

“Talking to AI” → “Building with AI.”

Developers who understand orchestration, validation, and architecture will dominate the next wave of software development.

The future AI developer is not a prompt writer.

They are an AI system architect.