Multi-Tenant AI Infrastructure Design: Building Scalable and Secure AI Platforms

As Artificial Intelligence adoption accelerates across industries, businesses are building AI-powered platforms that serve many customers simultaneously. From AI SaaS products and enterprise copilots to generative AI applications and machine learning APIs, modern AI systems must serve thousands, or even millions, of users efficiently.

This is where multi-tenant AI infrastructure becomes essential.

A multi-tenant AI architecture allows multiple users, organizations, or clients (tenants) to share the same infrastructure while maintaining isolation, security, scalability, and performance. Designing such systems is one of the most complex challenges in modern cloud and AI engineering.

In this post, we will explore how multi-tenant AI infrastructure works, its core architectural components, the major design challenges, and best practices for building scalable AI platforms.

What Is Multi-Tenant AI Infrastructure?

In traditional single-tenant systems:

- Each customer gets dedicated infrastructure.

- Resources are isolated completely.

- Operational costs are high.

In contrast, multi-tenant infrastructure allows multiple customers to share:

- Compute resources

- GPUs

- Databases

- APIs

- AI models

- Storage systems

While infrastructure is shared, tenant data and workloads remain logically isolated.

This model is widely used in:

- AI SaaS platforms

- LLM-powered applications

- Cloud AI services

- Enterprise AI products

- AI development platforms

The goal is to maximize efficiency while ensuring strong performance and security.

Why Multi-Tenant AI Systems Matter

AI infrastructure is extremely expensive, especially when running:

- Large Language Models (LLMs)

- GPU-intensive workloads

- Real-time inference systems

- Vector databases

- Distributed training pipelines

If companies allocate dedicated resources for every customer, costs become unsustainable.

Multi-tenant systems solve this by:

- Sharing expensive GPU infrastructure

- Improving resource utilization

- Reducing operational costs

- Scaling dynamically

- Simplifying deployment management

This architecture enables AI companies to serve large user bases efficiently without excessive infrastructure spending.

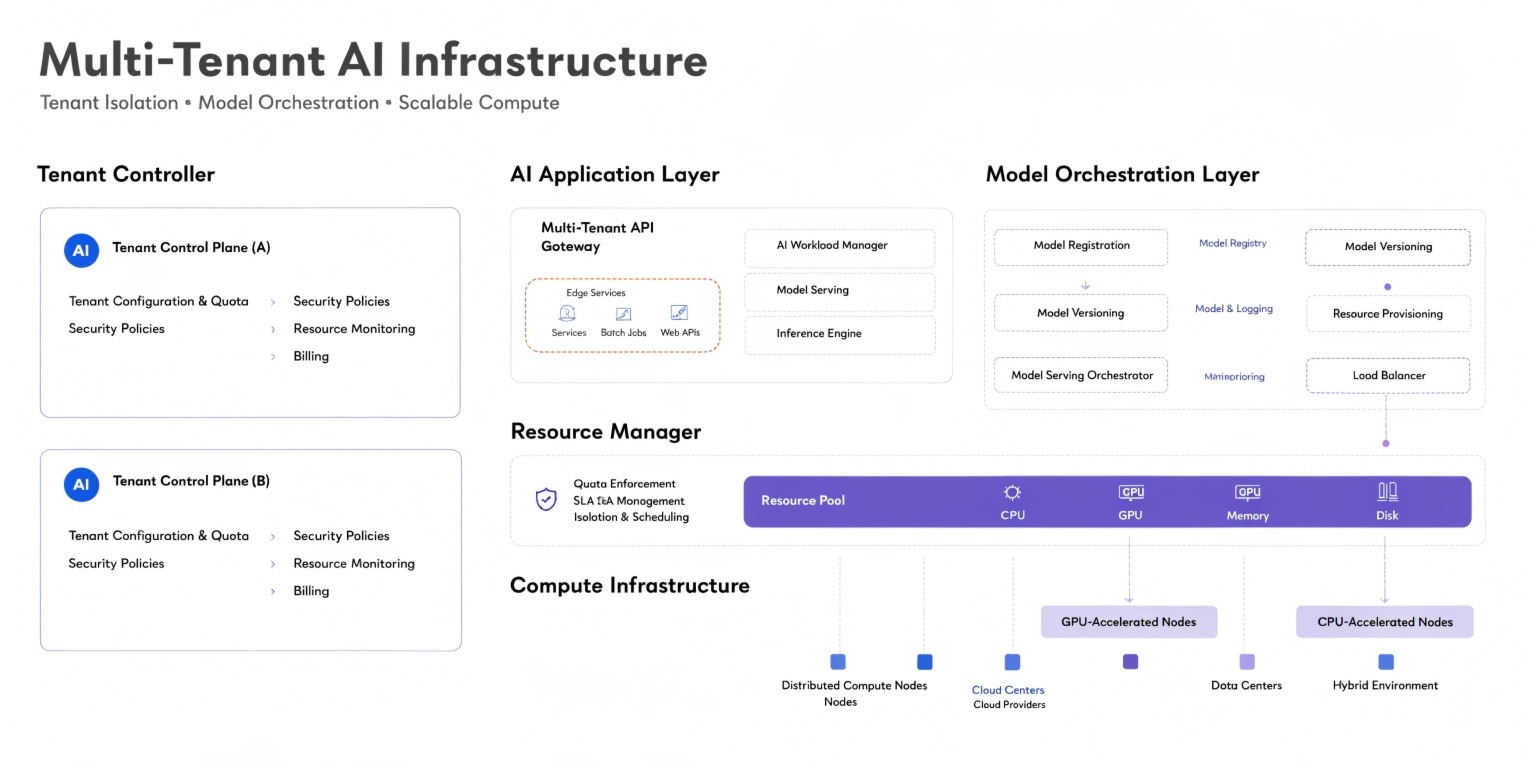

Core Components of Multi-Tenant AI Infrastructure

1. Tenant Isolation Layer

Tenant isolation is one of the most critical architectural requirements.

The system must ensure:

- Data privacy

- Secure API access

- Isolated workloads

- Role-based permissions

Isolation can occur at different levels:

- Database-level isolation

- Namespace isolation

- Container isolation

- Virtual machine isolation

Improper isolation may lead to data leaks or security vulnerabilities.
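To make the idea of logical isolation concrete, here is a minimal sketch of isolation enforced at the data-access layer. The `TenantScopedStore` class and its method names are hypothetical, and the in-memory dictionary stands in for whatever database, namespace, or row-level policy a real platform would use.

```python
from dataclasses import dataclass, field


class TenantAccessError(Exception):
    """Raised when a key does not exist within the caller's tenant."""


@dataclass
class TenantScopedStore:
    # Hypothetical in-memory store used only for illustration.
    _rows: dict = field(default_factory=dict)

    def put(self, tenant_id: str, key: str, value: str) -> None:
        # Every write is keyed by (tenant_id, key), so identical keys
        # from different tenants never collide.
        self._rows[(tenant_id, key)] = value

    def get(self, tenant_id: str, key: str) -> str:
        # Every read includes the caller's tenant_id, so a tenant has no
        # way to address rows that belong to someone else.
        try:
            return self._rows[(tenant_id, key)]
        except KeyError:
            raise TenantAccessError(
                f"{key!r} not found for tenant {tenant_id!r}"
            ) from None

    def keys_for(self, tenant_id: str) -> list:
        # List only the keys owned by the given tenant.
        return sorted(k for (t, k) in self._rows if t == tenant_id)


store = TenantScopedStore()
store.put("acme", "doc1", "acme contract")
store.put("globex", "doc1", "globex roadmap")
print(store.get("acme", "doc1"))
```

The design choice worth noting is that the tenant identifier is part of every lookup rather than a filter applied afterwards, which makes it harder to forget the check in a new code path.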

2. GPU Resource Management

AI workloads depend heavily on GPUs, which are costly and scarce.

Modern AI platforms use orchestration systems to:

- Allocate GPU workloads dynamically

- Prioritize high-value requests

- Optimize inference scheduling

- Prevent GPU bottlenecks

Orchestrators such as Kubernetes schedule these workloads across the nodes of a cluster, with GPUs exposed to the scheduler through vendor device plugins.

GPU sharing strategies often include:

- Time slicing

- Model batching

- Resource quotas

- Dynamic autoscaling

Efficient GPU management directly impacts platform profitability.
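The resource quota strategy can be sketched in a few lines. This is an illustrative toy, not how any particular orchestrator works: `GpuQuotaScheduler`, the slot counts, and the tenant names are all assumptions made for the example.

```python
from collections import deque


class GpuQuotaScheduler:
    """Admits jobs onto a fixed pool of GPU slots, capped per tenant."""

    def __init__(self, total_slots: int, quotas: dict):
        self.total_slots = total_slots
        self.quotas = quotas                  # tenant_id -> max concurrent slots
        self.in_use = {t: 0 for t in quotas}  # tenant_id -> slots currently held
        self.pending = deque()                # FIFO queue of (tenant_id, job_id)

    def submit(self, tenant_id: str, job_id: str) -> None:
        self.pending.append((tenant_id, job_id))

    def schedule(self) -> list:
        """Start every queued job that fits the pool and its tenant quota."""
        started = []
        still_waiting = deque()
        while self.pending:
            tenant_id, job_id = self.pending.popleft()
            free = self.total_slots - sum(self.in_use.values())
            if free > 0 and self.in_use[tenant_id] < self.quotas[tenant_id]:
                self.in_use[tenant_id] += 1
                started.append((tenant_id, job_id))
            else:
                # Over quota or pool exhausted: keep the job queued in order.
                still_waiting.append((tenant_id, job_id))
        self.pending = still_waiting
        return started

    def release(self, tenant_id: str) -> None:
        # Called when one of the tenant's jobs finishes.
        self.in_use[tenant_id] -= 1


scheduler = GpuQuotaScheduler(total_slots=3, quotas={"acme": 2, "globex": 2})
for tenant, job in [("acme", "a1"), ("acme", "a2"), ("acme", "a3"), ("globex", "g1")]:
    scheduler.submit(tenant, job)
print(scheduler.schedule())
```

In this run the third `acme` job waits because the tenant has hit its quota, while the `globex` job still gets the remaining slot. A quota like this is what keeps one busy tenant from starving the others.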

3. Model Serving Infrastructure

Serving AI models in multi-tenant environments requires highly optimized infrastructure.

The system must handle:

- High request concurrency

- Low latency inference

- Multiple AI models

- Version management

- Real-time scaling

Model serving layers often include:

- API gateways

- Inference servers

- Load balancers

- Caching systems

For generative AI applications, latency optimization becomes especially important because users expect near real-time responses.
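Version management across tenants is easier to picture with a small example. The sketch below assumes a hypothetical `ModelRouter` that sits behind the API gateway and decides which model version a tenant's request should reach; the model and tenant names are invented for illustration.

```python
class ModelRouter:
    """Resolves which model version a tenant's request should hit."""

    def __init__(self, default_versions: dict):
        self.default_versions = dict(default_versions)  # model name -> version
        self.tenant_pins = {}                           # (tenant_id, model) -> version

    def pin(self, tenant_id: str, model: str, version: str) -> None:
        # Let one tenant stay on a specific version while others move on.
        self.tenant_pins[(tenant_id, model)] = version

    def resolve(self, tenant_id: str, model: str) -> str:
        if model not in self.default_versions:
            raise KeyError(f"unknown model: {model}")
        version = self.tenant_pins.get((tenant_id, model))
        if version is None:
            # No pin for this tenant: fall back to the platform default.
            version = self.default_versions[model]
        return f"{model}:{version}"


router = ModelRouter({"summarizer": "v2"})
router.pin("acme", "summarizer", "v1")
print(router.resolve("acme", "summarizer"))    # pinned tenant
print(router.resolve("globex", "summarizer"))  # default version
```

Keeping the pin table separate from the defaults means a platform-wide upgrade only changes one entry, and tenants that need stability are unaffected until their pin is removed.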

4. Distributed Data Architecture

AI platforms process enormous volumes of structured and unstructured data.

A distributed data architecture helps:

- Scale storage efficiently

- Support real-time access

- Improve fault tolerance

- Enable high availability

Common infrastructure components include:

- Object storage systems

- Distributed databases

- Vector databases

- Streaming pipelines

Vector databases are particularly important for Retrieval-Augmented Generation (RAG) systems and semantic AI search.
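For RAG in a shared index, the retrieval step has to respect tenant boundaries. Here is a rough sketch using plain Python lists instead of a real vector database; the `search` function, the tuple layout of the index, and the tiny two-dimensional embeddings are all assumptions for the example.

```python
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(index: list, tenant_id: str, query: list, top_k: int = 2) -> list:
    """Return the top_k most similar document ids owned by tenant_id.

    `index` is a list of (owner_tenant, doc_id, embedding) tuples.
    """
    # Filter by owner BEFORE ranking, so another tenant's documents can
    # never appear in the result set, however similar they are.
    scored = [
        (cosine(query, vec), doc_id)
        for (owner, doc_id, vec) in index
        if owner == tenant_id
    ]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:top_k]]


index = [
    ("acme", "a1", [1.0, 0.0]),
    ("acme", "a2", [0.0, 1.0]),
    ("globex", "g1", [1.0, 0.0]),
]
print(search(index, "acme", [1.0, 0.0]))
```

Production vector databases typically offer metadata filters or per-tenant namespaces for the same purpose; the point of the sketch is only that the tenant filter belongs inside the query, not in post-processing.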

Security Challenges in Multi-Tenant AI Systems

Security is significantly more complicated in shared AI environments.

Major risks include:

- Cross-tenant data exposure

- Prompt injection attacks

- API abuse

- Unauthorized model access

- Data leakage through inference outputs

To mitigate these risks, companies implement:

- Encryption in transit and at rest

- Tenant-specific access controls

- API authentication

- Rate limiting

- AI monitoring systems

Security architecture must evolve continuously because AI attack surfaces are expanding rapidly.
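Rate limiting is one of the simpler mitigations to illustrate. The sketch below shows a per-tenant token bucket; the `TenantRateLimiter` class and its parameters are hypothetical, and the clock is passed in explicitly so the behavior is easy to follow and test.

```python
class TenantRateLimiter:
    """Token bucket per tenant: `burst` tokens max, refilled at `rate_per_sec`."""

    def __init__(self, rate_per_sec: float, burst: int):
        self.rate = rate_per_sec
        self.burst = burst
        self._buckets = {}  # tenant_id -> (tokens, last_timestamp)

    def allow(self, tenant_id: str, now: float) -> bool:
        # A tenant seen for the first time starts with a full bucket.
        tokens, last = self._buckets.get(tenant_id, (float(self.burst), now))
        # Refill according to elapsed time, never above the burst size.
        tokens = min(self.burst, tokens + (now - last) * self.rate)
        if tokens >= 1.0:
            self._buckets[tenant_id] = (tokens - 1.0, now)
            return True
        self._buckets[tenant_id] = (tokens, now)
        return False


limiter = TenantRateLimiter(rate_per_sec=1.0, burst=2)
print([limiter.allow("acme", now=0.0) for _ in range(3)])  # third call is rejected
print(limiter.allow("globex", now=0.0))                    # separate bucket
```

Because each tenant has its own bucket, a tenant that exhausts its allowance is throttled without affecting anyone else. In a real deployment `now` would come from a monotonic clock and the buckets would live in shared storage.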

Scalability and Performance Optimization

AI systems must handle unpredictable traffic spikes efficiently.

For example:

- Viral AI applications

- Enterprise batch workloads

- Large-scale inference requests

To support scalability, infrastructure often includes:

- Horizontal autoscaling

- Distributed load balancing

- Multi-region deployment

- Edge inference systems

Performance optimization also involves:

- Quantized models

- Caching frequent outputs

- Request batching

- Efficient token processing

Because each request holds a GPU for the duration of inference, even small latency improvements free capacity for other requests and can noticeably reduce infrastructure costs at scale.
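Caching frequent outputs needs extra care in a multi-tenant setting, because a cached response for one tenant must never be served to another. Below is a minimal sketch of a tenant-scoped LRU cache; `TenantResponseCache`, its key scheme, and the sample prompts are assumptions for illustration.

```python
import hashlib
import json
from collections import OrderedDict
from typing import Optional


class TenantResponseCache:
    """Small LRU cache whose keys always include the tenant id."""

    def __init__(self, max_entries: int = 1024):
        self.max_entries = max_entries
        self._entries = OrderedDict()  # cache key -> cached response

    @staticmethod
    def _key(tenant_id: str, model: str, prompt: str) -> str:
        # JSON-encode the parts so that different (tenant, model, prompt)
        # triples cannot produce the same string, then hash it.
        raw = json.dumps([tenant_id, model, prompt]).encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def get(self, tenant_id: str, model: str, prompt: str) -> Optional[str]:
        key = self._key(tenant_id, model, prompt)
        if key not in self._entries:
            return None
        self._entries.move_to_end(key)  # mark as most recently used
        return self._entries[key]

    def put(self, tenant_id: str, model: str, prompt: str, response: str) -> None:
        key = self._key(tenant_id, model, prompt)
        self._entries[key] = response
        self._entries.move_to_end(key)
        if len(self._entries) > self.max_entries:
            self._entries.popitem(last=False)  # evict least recently used


cache = TenantResponseCache(max_entries=2)
cache.put("acme", "summarizer", "hello", "cached answer")
print(cache.get("acme", "summarizer", "hello"))    # hit
print(cache.get("globex", "summarizer", "hello"))  # miss: different tenant
```

Every cache hit is a request that never reaches a GPU, which is where the savings come from. Including the tenant id in the key trades some hit rate for the guarantee that outputs do not leak across tenants.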

Monitoring and Observability

Observability is essential in multi-tenant AI environments.

Platforms must monitor:

- GPU usage

- Request latency

- Model accuracy

- Resource consumption

- Tenant-specific performance

AI observability tools help detect:

- Infrastructure failures

- Model drift

- Inference anomalies

- Security incidents

Without proper monitoring, debugging distributed AI systems becomes extremely difficult.
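Tenant-specific performance monitoring can start with something as simple as tracking latency per tenant. The sketch below is illustrative only: `TenantLatencyTracker` is a hypothetical class, it keeps all samples in memory, and it uses the nearest-rank method for percentiles.

```python
import math
from collections import defaultdict


class TenantLatencyTracker:
    """Records request latencies per tenant and reports percentiles."""

    def __init__(self):
        self._samples = defaultdict(list)  # tenant_id -> list of latencies (ms)

    def record(self, tenant_id: str, latency_ms: float) -> None:
        self._samples[tenant_id].append(latency_ms)

    def percentile(self, tenant_id: str, pct: int) -> float:
        """Nearest-rank percentile (pct from 1 to 100) for one tenant."""
        samples = sorted(self._samples.get(tenant_id, []))
        if not samples:
            raise ValueError(f"no samples recorded for tenant {tenant_id!r}")
        # Nearest-rank: the smallest value with at least pct% of samples
        # at or below it.
        rank = max(1, math.ceil(pct * len(samples) / 100))
        return samples[rank - 1]


tracker = TenantLatencyTracker()
for ms in [10, 20, 30, 40, 50, 60, 70, 80, 90, 100]:
    tracker.record("acme", ms)
tracker.record("globex", 900)

print(tracker.percentile("acme", 50))
print(tracker.percentile("acme", 95))
print(tracker.percentile("globex", 95))
```

Breaking metrics down by tenant is what makes it possible to spot a single slow or noisy tenant that a platform-wide average would hide. A production system would normally use a metrics backend with histograms rather than raw sample lists.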

The Future of Multi-Tenant AI Infrastructure

The future of AI infrastructure is moving toward:

- Autonomous resource orchestration

- Serverless AI inference

- AI-native cloud platforms

- Edge AI deployment

- Self-optimizing infrastructure

As AI adoption grows, companies will increasingly focus on balancing:

- Cost efficiency

- Performance

- Scalability

- Security

- Reliability

Organizations that build strong multi-tenant AI systems will gain major competitive advantages in the AI economy.

Conclusion

Multi-tenant AI infrastructure is becoming the backbone of modern AI platforms. It enables companies to deliver scalable, secure, and cost-efficient AI services across multiple users and organizations while maximizing infrastructure utilization.

However, designing these systems requires advanced expertise in cloud architecture, GPU orchestration, distributed systems, security engineering, and AI deployment optimization.

As AI applications continue expanding globally, multi-tenant infrastructure design will remain one of the most important engineering disciplines shaping the future of scalable AI platforms.