Modern game engines are built to handle highly concurrent workloads: rendering, physics simulation, AI, input handling, and networking all run simultaneously. Traditional locking mechanisms such as mutexes can become bottlenecks under contention, increasing latency and reducing performance. To overcome this, developers turn to lock-free data structures, which allow safe concurrent access without blocking threads.

Among the most commonly used lock-free structures in game development are queues and ring buffers, both essential for building responsive, real-time systems.

What Are Lock-Free Data Structures?

Lock-free data structures are designed to allow multiple threads to operate on shared data without using locks. Instead of blocking threads, they rely on atomic operations and memory ordering guarantees provided by modern CPUs.

The key benefits include:

- No thread blocking

- Reduced latency

- Better CPU utilization

- Improved scalability on multi-core systems

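Unlike lock-based systems, where a thread holding a lock can stall every thread waiting for it, lock-free systems guarantee that at least one thread always makes progress, even if others are delayed or preempted. Individual threads may still have to retry. The stronger guarantee that every thread finishes in a bounded number of steps is called wait-freedom.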
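The progress guarantee is easiest to see in a compare-and-swap (CAS) retry loop. The minimal sketch below assumes C++17. `raise_to_max` and `highest_reported` are hypothetical helpers, not standard library functions. When a thread's `compare_exchange_weak` fails (other than spuriously), it is because another thread changed the value first. So some thread has always made progress, even though no lock is ever taken.

```cpp
#include <atomic>
#include <cstddef>
#include <thread>
#include <vector>

// Hypothetical helper: raise `target` to `candidate` if candidate is larger.
// A non-spurious CAS failure means another thread updated `target` first,
// so the system as a whole progressed (lock-freedom).
void raise_to_max(std::atomic<int>& target, int candidate) {
    int current = target.load(std::memory_order_relaxed);
    // On failure, compare_exchange_weak stores the latest value into `current`.
    while (current < candidate &&
           !target.compare_exchange_weak(current, candidate,
                                         std::memory_order_relaxed)) {
        // retry with the refreshed `current`
    }
}

// Hypothetical usage: worker threads report frame times (microseconds) and the
// largest one is kept without any mutex.
int highest_reported(const std::vector<int>& samples, int num_threads) {
    std::atomic<int> peak{0};
    std::vector<std::thread> workers;
    for (int t = 0; t < num_threads; ++t) {
        workers.emplace_back([&samples, &peak, t, num_threads] {
            for (std::size_t i = static_cast<std::size_t>(t); i < samples.size();
                 i += static_cast<std::size_t>(num_threads)) {
                raise_to_max(peak, samples[i]);
            }
        });
    }
    for (auto& w : workers) w.join();
    return peak.load();
}
```

Some threads may loop more than once, but no thread can prevent the others from finishing by being descheduled while holding a lock.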

Why Game Engines Need Lock-Free Structures

Game engines demand consistent frame rates (e.g., 60 FPS or higher, which leaves roughly 16.7 ms per frame). Even small delays caused by thread contention can push a frame over that budget and show up as dropped frames or stuttering.

Lock-free structures help by:

- Eliminating contention between systems (render thread, logic thread, etc.)

- Enabling smooth data flow between subsystems

- Supporting real-time responsiveness

For example, a physics system can push updates to a queue while the rendering system consumes them without waiting.

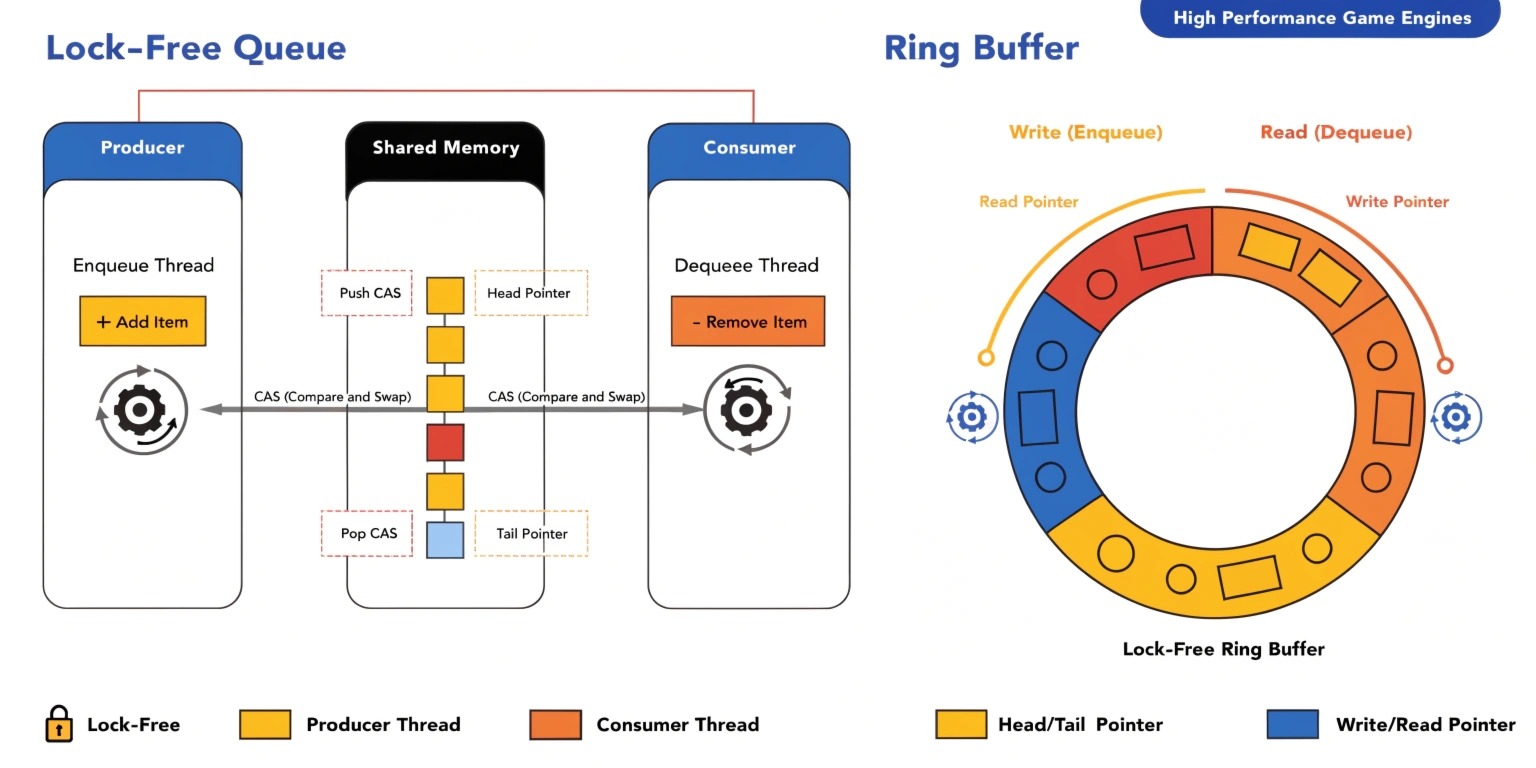

Lock-Free Queues

Overview

A lock-free queue lets threads enqueue and dequeue data concurrently without locks. General-purpose designs support multiple producers and multiple consumers (MPMC). They are widely used in the task scheduling systems of game engines.

How It Works

Lock-free queues typically use atomic operations like:

- Compare-And-Swap (CAS)

- Atomic increment/decrement

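A common implementation is the Michael-Scott queue. It is a singly linked list with a dummy node and atomic head and tail pointers, and threads use CAS both to link new nodes and to advance those pointers.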
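The sketch below follows the Michael-Scott design in C++17: a dummy node, CAS to link after the tail, and threads that find the tail lagging help advance it. It is simplified under one loudly stated assumption. Dequeued nodes are never freed while the queue is alive, so there are no hazard pointers and memory grows until the destructor runs. Because addresses are never reused, this also rules out pointer ABA. `MSQueue` and `ms_queue_demo` are hypothetical names. A production queue would add safe memory reclamation.

```cpp
#include <atomic>
#include <optional>
#include <thread>
#include <utility>
#include <vector>

// ASSUMPTION: nodes are freed only in ~MSQueue (no hazard pointers).
template <typename T>
class MSQueue {
    struct Node {
        std::optional<T> value;              // empty in the initial dummy node
        std::atomic<Node*> next{nullptr};
        Node* alloc_next = nullptr;          // links every node for cleanup
    };
    std::atomic<Node*> head_;                // dummy node; head_->next is the front
    std::atomic<Node*> tail_;                // last node, or one behind it
    std::atomic<Node*> all_nodes_{nullptr};  // push-only list freed in ~MSQueue

    Node* make_node() {
        Node* n = new Node();
        Node* old = all_nodes_.load(std::memory_order_relaxed);
        do { n->alloc_next = old; }
        while (!all_nodes_.compare_exchange_weak(old, n, std::memory_order_relaxed));
        return n;
    }

public:
    MSQueue() { Node* d = make_node(); head_.store(d); tail_.store(d); }
    ~MSQueue() {  // must run only after all other threads stop using the queue
        for (Node* n = all_nodes_.load(); n != nullptr;) {
            Node* next = n->alloc_next; delete n; n = next;
        }
    }
    MSQueue(const MSQueue&) = delete;
    MSQueue& operator=(const MSQueue&) = delete;

    void enqueue(T v) {
        Node* node = make_node();
        node->value.emplace(std::move(v));   // published by the release CAS below
        for (;;) {
            Node* tail = tail_.load(std::memory_order_acquire);
            Node* next = tail->next.load(std::memory_order_acquire);
            if (next == nullptr) {
                // Try to link the new node after the current last node.
                if (tail->next.compare_exchange_weak(next, node, std::memory_order_release,
                                                     std::memory_order_relaxed)) {
                    // Swing tail_ forward; failure is fine, another thread helped.
                    tail_.compare_exchange_strong(tail, node, std::memory_order_release,
                                                  std::memory_order_relaxed);
                    return;
                }
            } else {  // tail_ is lagging behind: help advance it, then retry
                tail_.compare_exchange_strong(tail, next, std::memory_order_release,
                                              std::memory_order_relaxed);
            }
        }
    }

    std::optional<T> dequeue() {
        for (;;) {
            Node* head = head_.load(std::memory_order_acquire);
            Node* tail = tail_.load(std::memory_order_acquire);
            Node* next = head->next.load(std::memory_order_acquire);
            if (head != head_.load(std::memory_order_acquire)) continue;  // stale snapshot
            if (next == nullptr) return std::nullopt;                      // queue is empty
            if (head == tail) {  // tail_ is lagging: help advance it, then retry
                tail_.compare_exchange_strong(tail, next, std::memory_order_release,
                                              std::memory_order_relaxed);
            } else if (head_.compare_exchange_strong(head, next, std::memory_order_acq_rel,
                                                      std::memory_order_relaxed)) {
                // Only the winning thread reads next->value; next becomes the new dummy.
                return std::move(next->value);
            }
        }
    }
};

// Hypothetical usage: producer threads each enqueue 1..n; two consumers drain the queue.
long long ms_queue_demo(int producers, int items_per_producer) {
    MSQueue<int> queue;
    const int total = producers * items_per_producer;
    std::atomic<int> consumed{0};
    std::atomic<long long> sum{0};
    std::vector<std::thread> threads;
    for (int p = 0; p < producers; ++p)
        threads.emplace_back([&queue, items_per_producer] {
            for (int i = 1; i <= items_per_producer; ++i) queue.enqueue(i);
        });
    for (int c = 0; c < 2; ++c)
        threads.emplace_back([&queue, &consumed, &sum, total] {
            while (consumed.load() < total)
                if (std::optional<int> v = queue.dequeue()) { sum += *v; ++consumed; }
        });
    for (auto& t : threads) t.join();
    return sum.load();
}
```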

Use Cases in Game Engines

- Task/job systems

- Event dispatch systems

- Asynchronous resource loading

- AI task scheduling

Advantages

- High throughput

- Minimal contention

- Scales well with multiple threads

Ring Buffers (Circular Buffers)

Overview

A ring buffer is a fixed-size array treated as a circle. A head index marks where the next item is written, and a tail index marks where the next item is read. It is especially effective in single-producer, single-consumer (SPSC) scenarios.

How It Works

- Data is written at the head

- Data is read from the tail

- When an index reaches the end of the array, it wraps around to the start

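With proper atomic handling, ring buffers can be made lock-free. The SPSC case is especially simple. Only the producer writes the head and only the consumer writes the tail, so each side can publish its own index with a release store and read the other's with an acquire load. No CAS loop is needed.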
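A minimal SPSC ring buffer sketch in C++17 follows. It writes at the head, reads at the tail, and wraps around with a bit mask. Several details are assumptions of this sketch rather than requirements: the capacity is a power of two, the indices grow monotonically and are masked on access, and `T` is default-constructible and copyable. `SpscRingBuffer`, `try_push`, `try_pop`, and `spsc_delivers_in_order` are hypothetical names. Each operation finishes in a bounded number of steps, so this particular design is in fact wait-free.

```cpp
#include <array>
#include <atomic>
#include <cstddef>
#include <optional>
#include <thread>

template <typename T, std::size_t Capacity>
class SpscRingBuffer {
    static_assert(Capacity >= 2 && (Capacity & (Capacity - 1)) == 0,
                  "Capacity must be a power of two so wrap-around is a bit mask");
    std::array<T, Capacity> slots_{};
    // head_ is written only by the producer, tail_ only by the consumer.
    // Indices grow without bound and are masked on access; size = head - tail.
    alignas(64) std::atomic<std::size_t> head_{0};
    alignas(64) std::atomic<std::size_t> tail_{0};

public:
    // Producer thread only. Returns false if the buffer is full.
    bool try_push(const T& value) {
        const std::size_t head = head_.load(std::memory_order_relaxed);
        const std::size_t tail = tail_.load(std::memory_order_acquire);
        if (head - tail == Capacity) return false;           // full
        slots_[head & (Capacity - 1)] = value;               // write at the head
        head_.store(head + 1, std::memory_order_release);    // publish the slot
        return true;
    }

    // Consumer thread only. Returns std::nullopt if the buffer is empty.
    std::optional<T> try_pop() {
        const std::size_t tail = tail_.load(std::memory_order_relaxed);
        const std::size_t head = head_.load(std::memory_order_acquire);
        if (head == tail) return std::nullopt;                // empty
        T value = slots_[tail & (Capacity - 1)];             // read from the tail
        tail_.store(tail + 1, std::memory_order_release);    // hand the slot back
        return value;
    }
};

// Hypothetical usage: an input thread streams event IDs 1..count to the game thread.
bool spsc_delivers_in_order(int count) {
    SpscRingBuffer<int, 256> buffer;
    std::thread producer([&buffer, count] {
        for (int i = 1; i <= count; ++i)
            while (!buffer.try_push(i)) std::this_thread::yield();  // full: back off
    });
    bool in_order = true;
    for (int expected = 1; expected <= count;) {
        if (std::optional<int> v = buffer.try_pop()) {
            in_order = in_order && (*v == expected);
            ++expected;
        } else {
            std::this_thread::yield();                              // empty: back off
        }
    }
    producer.join();
    return in_order;
}
```

The `alignas(64)` on the two indices keeps the producer's and consumer's hot variables on separate cache lines, assuming 64-byte lines.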

Use Cases in Game Engines

- Audio processing pipelines

- Input event buffering

- Network packet handling

- Frame data streaming

Advantages

- Cache-friendly memory layout

- Predictable performance

- No dynamic memory allocation after initialization

Lock-Free vs Lock-Based Structures

| Aspect | Lock-Based | Lock-Free |
|---|---|---|
| Thread blocking | Yes | No |
| Latency | Higher | Lower |
| Implementation complexity | Easier | More complex |
| Scalability | Limited | High |
| Risk of deadlocks | Present | Eliminated |

Lock-free systems are harder to implement correctly. In real-time applications like games, however, the payoff can be substantial, particularly where many threads contend for the same data.

Implementation Considerations

1. Atomic Operations

Use the atomic primitives your language provides, such as C++'s std::atomic (load, store, fetch_add, compare_exchange_weak/compare_exchange_strong), to ensure thread safety.
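As a small illustration, the sketch below has several worker threads count completed jobs. It assumes C++17, and `count_completed_jobs` is a hypothetical helper. Incrementing a plain `int` from several threads would be a data race (undefined behavior). `std::atomic<int>::fetch_add` is an indivisible read-modify-write, so no increments are lost.

```cpp
#include <atomic>
#include <thread>
#include <vector>

// std::atomic<T> may fall back to an internal lock for some T; for int this
// holds as lock-free on mainstream x86-64 and ARM64 targets.
static_assert(std::atomic<int>::is_always_lock_free, "expected lock-free int atomics");

// Hypothetical example: worker threads count how many jobs they completed.
int count_completed_jobs(int num_workers, int jobs_per_worker) {
    std::atomic<int> completed{0};
    std::vector<std::thread> workers;
    for (int w = 0; w < num_workers; ++w) {
        workers.emplace_back([&completed, jobs_per_worker] {
            for (int j = 0; j < jobs_per_worker; ++j) {
                // Only the final count matters, so relaxed ordering is enough.
                completed.fetch_add(1, std::memory_order_relaxed);
            }
        });
    }
    for (auto& t : workers) t.join();  // join() makes every increment visible here
    return completed.load();
}
```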

2. Memory Ordering

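Understanding memory ordering (relaxed, acquire, release) is crucial for correctness. Atomicity only makes each individual operation indivisible. Ordering determines when other threads are guaranteed to see the writes around that operation. When an acquire load reads the value written by a release store, every write made before the store is visible after the load.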
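The release/acquire pairing can be sketched as a hand-off between a physics thread and a render thread. This assumes C++17, and `PhysicsSnapshot` and `publish_and_read` are hypothetical names. The snapshot itself is plain, non-atomic data. Only the `ready` flag is atomic, and its release/acquire pair is what makes reading the snapshot safe.

```cpp
#include <atomic>
#include <thread>

struct PhysicsSnapshot {  // hypothetical payload: plain, non-atomic data
    float position[3];
    int frame;
};

PhysicsSnapshot publish_and_read() {
    PhysicsSnapshot snapshot{};
    std::atomic<bool> ready{false};

    std::thread physics([&snapshot, &ready] {
        snapshot.position[0] = 1.0f;
        snapshot.position[1] = 2.0f;
        snapshot.position[2] = 3.0f;
        snapshot.frame = 42;
        // Release: every write above becomes visible to a thread whose
        // acquire load reads this `true`.
        ready.store(true, std::memory_order_release);
    });

    // Acquire: pairs with the release store. With memory_order_relaxed on both
    // sides, reading `snapshot` below would be a data race (undefined behavior).
    while (!ready.load(std::memory_order_acquire)) {
        std::this_thread::yield();
    }
    PhysicsSnapshot copy = snapshot;
    physics.join();
    return copy;
}
```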

3. False Sharing

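Avoid cache line contention by aligning data properly. False sharing happens when independent variables written by different threads sit on the same cache line (commonly 64 bytes). The line then bounces between cores even though no data is actually shared. Padding or aligning frequently written fields, such as a ring buffer's head and tail indices, onto separate cache lines avoids it.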
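The difference is visible in the data layout alone. The sketch below assumes C++17 and a 64-byte cache line, which is typical on x86-64 and many ARM cores but not universal. `kCacheLine`, `SharedCounters`, and `PaddedCounters` are hypothetical names. Where the standard library provides it, `std::hardware_destructive_interference_size` from `<new>` is the portable way to express the line size.

```cpp
#include <atomic>
#include <cstddef>

// ASSUMPTION: 64-byte cache lines.
inline constexpr std::size_t kCacheLine = 64;

// Prone to false sharing: both counters normally land on the same cache line, so a
// render thread and a physics thread updating "their own" counter still contend.
struct SharedCounters {
    std::atomic<long> render_frames{0};
    std::atomic<long> physics_steps{0};
};

// Padded: alignas places each counter at the start of its own cache line.
struct PaddedCounters {
    alignas(kCacheLine) std::atomic<long> render_frames{0};
    alignas(kCacheLine) std::atomic<long> physics_steps{0};
};

static_assert(alignof(PaddedCounters) == kCacheLine, "each member starts a new line");
static_assert(sizeof(PaddedCounters) >= 2 * kCacheLine, "members do not share a line");
```

The padded version trades memory for isolation, so it is worth applying only to data that different threads write frequently.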

4. ABA Problem

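The ABA problem is a common issue in lock-free programming. A thread reads value A, another thread changes it to B and then back to A, and the first thread's compare-and-swap succeeds even though the structure changed in between. This is most dangerous when the value is a pointer whose node was freed and then reused. Solutions include version counters (tags) or hazard pointers.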
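A version counter can be sketched as a lock-free free list of slot indices over a fixed pool, such as particle or job slots. This assumes C++17 and a lock-free 64-bit `std::atomic`. The head packs a 32-bit slot index with a 32-bit tag, and every successful update bumps the tag. A CAS based on a stale read therefore fails even if the index has come back to the same value. `TaggedFreeList`, `allocate`, `deallocate`, and `free_list_stress` are hypothetical names. The tag can in principle wrap around after about 4 billion updates, which this sketch accepts.

```cpp
#include <array>
#include <atomic>
#include <cstdint>
#include <optional>
#include <thread>
#include <vector>

template <std::uint32_t N>
class TaggedFreeList {
    static_assert(N > 0, "pool must not be empty");
    static constexpr std::uint32_t kNone = 0xFFFFFFFFu;  // "no free slot" marker
    std::array<std::atomic<std::uint32_t>, N> next_;     // next free slot, per slot
    std::atomic<std::uint64_t> head_;                     // (tag << 32) | index

    static std::uint64_t pack(std::uint32_t tag, std::uint32_t index) {
        return (static_cast<std::uint64_t>(tag) << 32) | index;
    }
    static std::uint32_t index_of(std::uint64_t h) { return static_cast<std::uint32_t>(h); }
    static std::uint32_t tag_of(std::uint64_t h) { return static_cast<std::uint32_t>(h >> 32); }

public:
    TaggedFreeList() {
        for (std::uint32_t i = 0; i < N; ++i)
            next_[i].store(i + 1 < N ? i + 1 : kNone, std::memory_order_relaxed);
        head_.store(pack(0, 0), std::memory_order_relaxed);
    }

    // Take a free slot, or std::nullopt if the pool is exhausted.
    std::optional<std::uint32_t> allocate() {
        std::uint64_t old = head_.load(std::memory_order_acquire);
        for (;;) {
            const std::uint32_t index = index_of(old);
            if (index == kNone) return std::nullopt;
            // May be stale if another thread took and returned `index` meanwhile;
            // the tag then differs, so the CAS below fails instead of corrupting the list.
            const std::uint32_t next = next_[index].load(std::memory_order_relaxed);
            if (head_.compare_exchange_weak(old, pack(tag_of(old) + 1, next),
                                            std::memory_order_acquire,
                                            std::memory_order_acquire))
                return index;
        }
    }

    // Return a slot previously obtained from allocate().
    void deallocate(std::uint32_t index) {
        std::uint64_t old = head_.load(std::memory_order_relaxed);
        do {
            next_[index].store(index_of(old), std::memory_order_relaxed);
        } while (!head_.compare_exchange_weak(old, pack(tag_of(old) + 1, index),
                                              std::memory_order_release,
                                              std::memory_order_relaxed));
    }
};

// Hypothetical stress check: a slot must never be held by two threads at once.
bool free_list_stress(int threads, int iterations) {
    TaggedFreeList<8> list;
    std::array<std::atomic<bool>, 8> in_use{};
    std::atomic<bool> ok{true};
    std::vector<std::thread> workers;
    for (int t = 0; t < threads; ++t)
        workers.emplace_back([&list, &in_use, &ok, iterations] {
            for (int i = 0; i < iterations; ++i) {
                if (std::optional<std::uint32_t> slot = list.allocate()) {
                    if (in_use[*slot].exchange(true)) ok.store(false);  // double hand-out
                    in_use[*slot].store(false);
                    list.deallocate(*slot);
                }
            }
        });
    for (auto& w : workers) w.join();
    return ok.load();
}
```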

Challenges of Lock-Free Programming

Despite their benefits, lock-free data structures are not easy to implement:

- Hard to debug

- Requires deep understanding of concurrency

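- Architecture-dependent behavior (code that happens to work under x86's relatively strong memory model can fail on weaker architectures such as ARM)

- Subtle bugs (race conditions, use-after-free during memory reclamation)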

Because of this, many developers prefer using well-tested libraries rather than building from scratch.

Best Practices for Game Developers

- Use lock-free structures only where performance is critical

- Prefer SPSC ring buffers for simple pipelines

- Use well-tested existing libraries (e.g., Boost.Lockfree, which provides queue, stack, and spsc_queue containers)

- Profile performance before and after implementation

- Combine lock-free and lock-based approaches when appropriate

Future Trends in Game Engine Concurrency

As CPUs continue to increase core counts, lock-free programming will become even more important. Modern engines are moving toward:

- Job-based architectures

- Task graphs

- Data-oriented design (DOD)

- ECS (Entity Component Systems)

Lock-free queues and ring buffers will remain foundational tools in achieving high-performance concurrency.

Conclusion

Lock-free data structures like queues and ring buffers are essential for building high-performance game engines. By avoiding locks, they can reduce latency, improve scalability, and help keep real-time execution smooth.

While they introduce complexity, the performance gains make them invaluable for modern game development. For developers aiming to build responsive, scalable systems, mastering lock-free programming is a significant step forward.