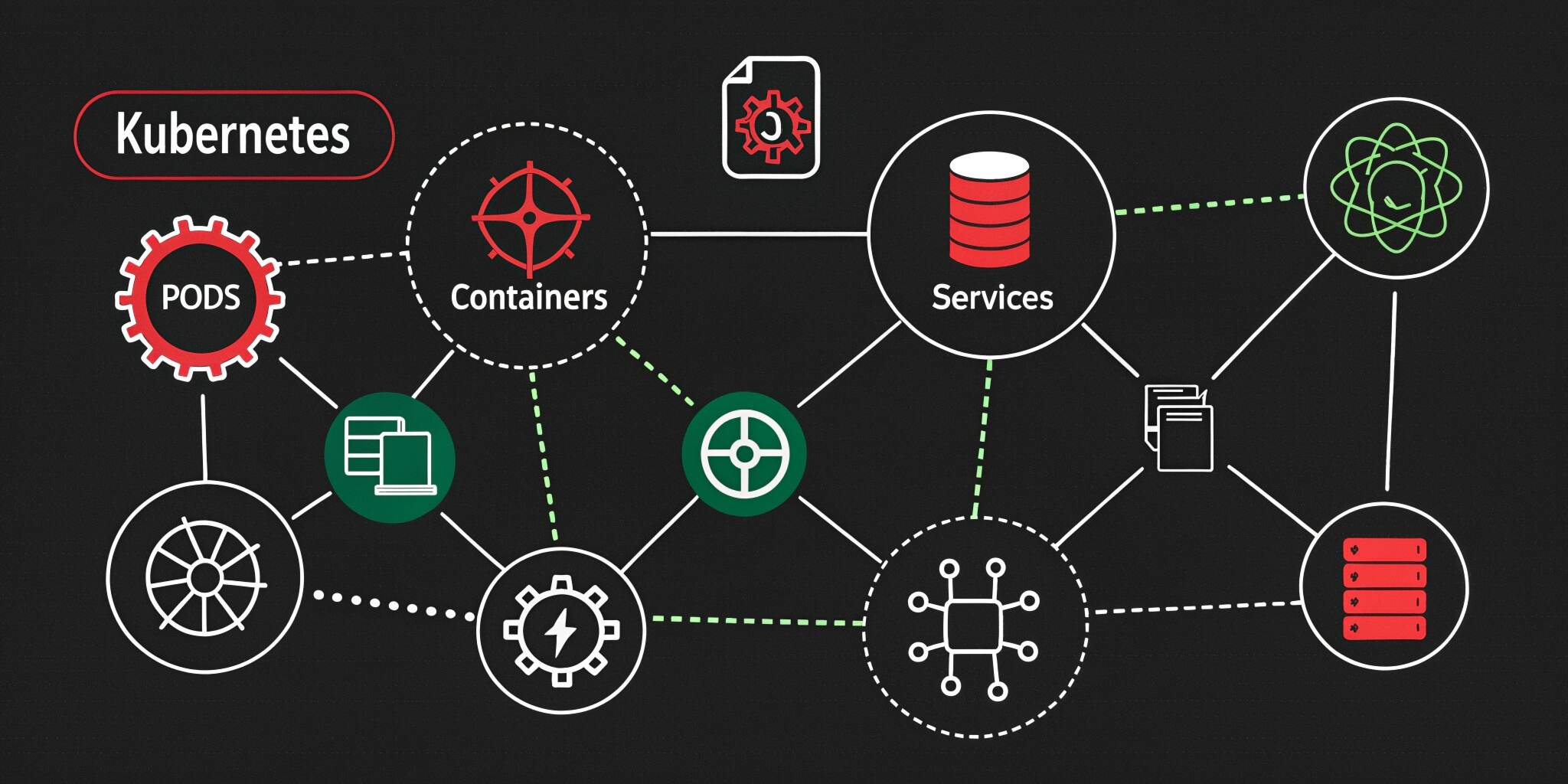

Kubernetes has become the backbone of modern cloud-native applications, enabling organizations to deploy, scale, and manage containerized workloads efficiently. However, like any distributed system, Kubernetes is not immune to failures. Understanding common failure scenarios and implementing effective recovery strategies is essential to ensure system reliability and high availability.

Why Failure Handling Matters in Kubernetes

In a Kubernetes environment, applications are distributed across multiple nodes and containers. While this architecture improves scalability, it also introduces complexity. Failures can occur at several levels: pods, nodes, networking, or even control plane components.

Without proper recovery strategies, these failures can lead to downtime, degraded performance, and poor user experience.

Common Kubernetes Failure Scenarios

1. Pod Failures and Crashes

Pods may fail because of application errors, resource limits, or misconfigurations. CrashLoopBackOff is a common status in which a container keeps crashing or exiting soon after it starts, and the kubelet waits progressively longer before each restart.
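The growing restart delay behind CrashLoopBackOff can be sketched in a few lines of Python. This sketch assumes the defaults described in the Kubernetes documentation: a 10-second initial delay that doubles after each crash and is capped at five minutes. Exact values may differ between Kubernetes versions. The function name `restart_delays` is hypothetical.

```python
from __future__ import annotations

# Sketch of the kubelet's CrashLoopBackOff restart delays.
# Assumed defaults: 10 s initial delay, doubled after each crash, capped at 300 s.
INITIAL_DELAY_S = 10
MAX_DELAY_S = 300


def restart_delays(crashes: int) -> list[int]:
    """Delay (seconds) the kubelet waits before each of `crashes` successive restarts."""
    delays = []
    delay = INITIAL_DELAY_S
    for _ in range(crashes):
        delays.append(delay)
        delay = min(delay * 2, MAX_DELAY_S)  # exponential back-off, capped
    return delays


print(restart_delays(7))  # [10, 20, 40, 80, 160, 300, 300]
```

The kubelet resets this timer once a container has run for 10 minutes without crashing. After that reset, the next crash starts the schedule again at 10 seconds.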

2. Node Failures

A node can become unavailable due to hardware issues, network failures, or system crashes. This affects all pods running on that node.

3. Network Failures

Issues in the cluster network can disrupt communication between services, leading to partial or complete outages.

4. Resource Exhaustion

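Insufficient CPU, memory, or storage degrades workloads in different ways. A container that hits its CPU limit is throttled, and a container that exceeds its memory limit is OOM-killed. When a node runs low on memory or disk, the kubelet evicts pods to reclaim resources.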
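When a node comes under memory pressure, the Kubernetes documentation says the kubelet ranks eviction candidates using three criteria, in this order:

1. Whether a pod's usage exceeds its request.
2. The pod's priority.
3. How far the pod's usage is above its request.

The Python sketch below models only that ranking for memory. The `PodUsage` class and the sample pods are hypothetical, and the real kubelet handles further cases (other resources, static pods) that are omitted here.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PodUsage:
    """Hypothetical snapshot of one pod's memory usage on a node under memory pressure."""
    name: str
    priority: int       # Pod Priority; higher values are more important
    request_mib: int    # memory request (0 for BestEffort pods)
    usage_mib: int      # current memory usage


def eviction_order(pods: list[PodUsage]) -> list[str]:
    """Return pod names in the order they would be considered for eviction (simplified)."""
    def rank(p: PodUsage) -> tuple[bool, int, int]:
        exceeds = p.usage_mib > p.request_mib
        # 1) pods using more than they requested first, 2) lower priority first,
        # 3) larger usage above the request first
        return (not exceeds, p.priority, -(p.usage_mib - p.request_mib))
    return [p.name for p in sorted(pods, key=rank)]


pods = [
    PodUsage("payments-db", priority=1000, request_mib=512, usage_mib=400),
    PodUsage("batch-report", priority=0, request_mib=0, usage_mib=300),
    PodUsage("web", priority=100, request_mib=200, usage_mib=250),
]
print(eviction_order(pods))  # ['batch-report', 'web', 'payments-db']
```

The pod that stays within its request is considered last. This is one practical reason to set realistic memory requests.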

5. Control Plane Failures

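Failures in critical components like the API server, scheduler, controller manager, or etcd can disrupt cluster operations. Pods that are already running usually keep running. Until the control plane recovers, however, new pods cannot be scheduled, failed pods are not replaced, and no configuration changes can be applied.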
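etcd stays writable only while a majority (quorum) of its members can communicate. That majority determines how many control plane failures a cluster can survive. The short Python sketch below computes quorum size and fault tolerance for common cluster sizes; the function names are illustrative only.

```python
def quorum(members: int) -> int:
    """Votes needed for a majority in an etcd cluster of `members` voting members."""
    return members // 2 + 1


def fault_tolerance(members: int) -> int:
    """Members that can fail while the remaining ones still form a quorum."""
    return members - quorum(members)


for n in (1, 3, 4, 5):
    print(f"{n} members: quorum {quorum(n)}, tolerates {fault_tolerance(n)} failure(s)")
```

A four-member cluster tolerates no more failures than a three-member one. This is why etcd is usually run with an odd number of members, typically three or five.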

6. Configuration Errors

Incorrect YAML configurations, broken deployments, or faulty updates can introduce instability into the system.
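Many configuration errors can be caught before they reach the cluster. The Python sketch below is a hypothetical pre-deployment check on a Deployment manifest that has already been parsed from YAML into a dict; `lint_deployment` and its rules are illustrative only.

- **API rule:** the selector must match the pod template labels. The API server rejects manifests that break this.
- **Policy choices (assumptions):** at least two replicas, resources set, and a readiness probe defined.

In practice, `kubectl apply --dry-run=server` and dedicated policy tools cover far more cases.

```python
def lint_deployment(manifest: dict) -> list[str]:
    """Return problems found in a Deployment manifest (hypothetical policy)."""
    problems = []
    spec = manifest.get("spec", {})
    template = spec.get("template", {})
    selector = spec.get("selector", {}).get("matchLabels", {})
    labels = template.get("metadata", {}).get("labels", {})
    # API rule: the selector must match the pod template's labels.
    for key, value in selector.items():
        if labels.get(key) != value:
            problems.append(f"selector {key}={value} does not match template labels")
    # Policy choices below are assumptions, not Kubernetes requirements.
    if spec.get("replicas", 1) < 2:  # replicas defaults to 1 when omitted
        problems.append("replicas < 2: a single pod failure causes downtime")
    for container in template.get("spec", {}).get("containers", []):
        name = container.get("name", "<unnamed>")
        if "resources" not in container:
            problems.append(f"container {name}: no resource requests/limits")
        if "readinessProbe" not in container:
            problems.append(f"container {name}: no readinessProbe")
    return problems


bad = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "selector": {"matchLabels": {"app": "web"}},
        "template": {
            "metadata": {"labels": {"app": "frontend"}},
            "spec": {"containers": [{"name": "web", "image": "nginx:1.27"}]},
        },
    },
}
for problem in lint_deployment(bad):
    print(problem)
```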

Recovery Strategies for Kubernetes Failures

1. Self-Healing with ReplicaSets and Deployments

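A ReplicaSet continuously compares the number of running pods with the desired count. It creates replacements when pods fail, are deleted, or are lost along with their node. Deployments manage ReplicaSets for you, so set replicas to at least two to keep the application available while a replacement pod starts.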
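The self-healing described above is a control loop. The controller observes the current state, compares it with the desired state, and acts to close the gap. The Python class below is a hypothetical, heavily simplified model of that loop. The real controller also uses label selectors, owner references, and a ranking to choose which pod to delete.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ReplicaSetSketch:
    """Hypothetical, simplified model of the ReplicaSet control loop."""
    name: str
    desired: int
    pods: set[str] = field(default_factory=set)
    next_id: int = 0

    def reconcile(self) -> None:
        # Too few pods: create replacements until the desired count is reached.
        while len(self.pods) < self.desired:
            self.next_id += 1
            self.pods.add(f"{self.name}-{self.next_id}")
        # Too many pods: delete surplus ones (arbitrary choice in this sketch).
        while len(self.pods) > self.desired:
            self.pods.pop()


rs = ReplicaSetSketch("web", desired=3)
rs.reconcile()
print(sorted(rs.pods))    # ['web-1', 'web-2', 'web-3']
rs.pods.discard("web-2")  # simulate a crashed or deleted pod
rs.reconcile()
print(sorted(rs.pods))    # ['web-1', 'web-3', 'web-4']
```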

2. Liveness and Readiness Probes

Configure probes to detect unhealthy containers:

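- Liveness probes: when a container's liveness probe fails repeatedly, the kubelet restarts that container

- Readiness probes: a pod whose readiness probe fails is removed from Service endpoints, so traffic is only sent to ready pods; the container is not restarted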

Together, these mechanisms limit how much a faulty container can affect users.
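Probes act on consecutive results, not on a single check. The Kubernetes defaults are periodSeconds 10, failureThreshold 3, and successThreshold 1. With these values, a pod is marked unready only after about 30 seconds of continuous failures.

The Python sketch below models readiness that way; `ready_states` is a hypothetical name. Liveness uses the same consecutive-failure counting, but its action is a container restart rather than removal from endpoints.

```python
def ready_states(results: list, failure_threshold: int = 3, success_threshold: int = 1) -> list:
    """Ready/unready state after each probe result (simplified model of readiness)."""
    ready = False  # readiness counts as failed until a probe succeeds
    successes = failures = 0
    states = []
    for ok in results:
        if ok:
            successes, failures = successes + 1, 0
            if successes >= success_threshold:
                ready = True
        else:
            failures, successes = failures + 1, 0
            if failures >= failure_threshold:
                ready = False
        states.append(ready)
    return states


# Two isolated failures are tolerated; the third consecutive failure marks the pod unready.
print(ready_states([True, False, False, True, False, False, False]))
```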

3. Node Recovery and Auto-Scheduling

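When a node becomes unreachable, Kubernetes taints it and, after a grace period (five minutes by default), evicts its pods. Controllers such as Deployments then create replacement pods on healthy nodes. To support this: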

- Use multiple nodes across availability zones

- Enable cluster autoscaling
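The five-minute default mentioned above comes from tolerations. Unless a pod specifies its own, the DefaultTolerationSeconds admission plugin gives each pod tolerations for the node.kubernetes.io/not-ready and node.kubernetes.io/unreachable taints with tolerationSeconds set to 300. Once a node is tainted, its pods are evicted when that toleration expires. If a matching toleration has no tolerationSeconds, the pod tolerates the taint indefinitely, and zero or negative values mean immediate eviction.

The Python sketch below computes the eviction time under those rules; the function name is hypothetical.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone

DEFAULT_TOLERATION_SECONDS = 300  # assumed default from the DefaultTolerationSeconds plugin


def pod_evicted_at(node_tainted_at: datetime,
                   toleration_seconds: int | None = DEFAULT_TOLERATION_SECONDS) -> datetime | None:
    """When a pod on a not-ready/unreachable node is evicted; None means never."""
    if toleration_seconds is None:
        return None  # toleration without a time limit: the pod stays bound to the node
    return node_tainted_at + timedelta(seconds=max(toleration_seconds, 0))


tainted = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
print(pod_evicted_at(tainted))      # 2024-01-01 12:05:00+00:00
print(pod_evicted_at(tainted, 30))  # 2024-01-01 12:00:30+00:00
```

Setting a shorter tolerationSeconds on critical pods speeds up failover. The trade-off is that brief network blips become more likely to trigger unnecessary evictions.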

4. Resource Management

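Define resource requests and limits for every container. Requests tell the scheduler how much CPU and memory to reserve, and limits cap what the container may consume, which keeps one workload from starving its neighbors. Use the Horizontal Pod Autoscaler (HPA) to add or remove replicas based on observed metrics such as CPU utilization.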
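The core HPA calculation is documented as:

desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue)

By default, no scaling happens while that ratio stays within a 0.1 tolerance of 1.0. The Python sketch below applies this formula and clamps the result to minReplicas and maxReplicas.

The function name and the default maxReplicas of 10 are illustrative assumptions. The real controller also handles missing metrics, not-yet-ready pods, and a scale-down stabilization window.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA replica calculation for a single metric."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target: leave replicas unchanged
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, current_metric=90, target_metric=60))  # 6 (scale up)
print(desired_replicas(4, current_metric=63, target_metric=60))  # 4 (within tolerance)
print(desired_replicas(4, current_metric=20, target_metric=60))  # 2 (scale down)
```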

5. Backup and Restore Strategies

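Regularly back up critical data, especially etcd, the key-value store that holds the cluster's state (every API object, from Deployments to Secrets). etcd does not contain the data stored in persistent volumes, so back those up separately. If the control plane is lost, an etcd snapshot lets you restore the cluster state quickly.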
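An etcd backup is typically taken with `etcdctl snapshot save`. The Python sketch below builds and runs that command with a timestamped file name, using etcdctl's `--endpoints`, `--cacert`, `--cert`, and `--key` flags.

The certificate paths assume a kubeadm-style layout under /etc/kubernetes/pki/etcd, and the endpoint assumes etcd listens locally; adjust both for your cluster. The helper names are hypothetical.

```python
from __future__ import annotations

import os
import subprocess
from datetime import datetime, timezone
from pathlib import Path

# Assumption: kubeadm-style certificate paths and a local etcd endpoint.
PKI_DIR = Path("/etc/kubernetes/pki/etcd")
ENDPOINT = "https://127.0.0.1:2379"


def snapshot_command(backup_dir: Path, now: datetime | None = None) -> list[str]:
    """Build an `etcdctl snapshot save` command that writes a timestamped snapshot file."""
    stamp = (now or datetime.now(timezone.utc)).strftime("%Y%m%dT%H%M%SZ")
    target = backup_dir / f"etcd-snapshot-{stamp}.db"
    return [
        "etcdctl", "snapshot", "save", str(target),
        f"--endpoints={ENDPOINT}",
        f"--cacert={PKI_DIR / 'ca.crt'}",
        f"--cert={PKI_DIR / 'server.crt'}",
        f"--key={PKI_DIR / 'server.key'}",
    ]


def take_snapshot(backup_dir: Path) -> None:
    """Run the snapshot (requires etcdctl on PATH and read access to the certificates)."""
    backup_dir.mkdir(parents=True, exist_ok=True)
    env = {**os.environ, "ETCDCTL_API": "3"}  # select the v3 API explicitly
    subprocess.run(snapshot_command(backup_dir), check=True, env=env)
```

Run such a job on a schedule and copy the snapshots off the control-plane node. A backup stored only on the machine that failed will not help.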

6. Rolling Updates and Rollbacks

Use rolling updates to replace pods gradually, so that part of the old version keeps serving while new pods come up. If issues arise, roll back to the previous revision with `kubectl rollout undo deployment/<name>`.
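The pace of a rolling update is controlled by two Deployment fields, maxSurge and maxUnavailable, both defaulting to 25%. When they are given as percentages, Kubernetes rounds maxSurge up and maxUnavailable down. If both resolve to zero, it allows one unavailable pod.

The Python sketch below computes the resulting bounds on pod counts during a rollout; the function names are hypothetical.

```python
from __future__ import annotations


def resolve(value: int | str, replicas: int, round_up: bool) -> int:
    """Turn an absolute count or a percentage string such as '25%' into a pod count."""
    if isinstance(value, int):
        return value
    scaled = replicas * int(value.rstrip("%"))
    # Integer ceil/floor division avoids floating-point rounding surprises.
    return -(-scaled // 100) if round_up else scaled // 100


def rollout_bounds(replicas: int, max_surge: int | str = "25%",
                   max_unavailable: int | str = "25%") -> tuple[int, int]:
    """Return (maximum total pods, minimum available pods) during a rolling update."""
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        unavailable = 1  # otherwise the rollout could never make progress
    return replicas + surge, replicas - unavailable


print(rollout_bounds(10))  # (13, 8): up to 13 pods exist, at least 8 stay available
```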

7. Network Resilience

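Use a service mesh (such as Istio or Linkerd) or client libraries that add timeouts, retries, and circuit breakers, so that a slow or failing service does not cascade into failures of its callers. Retries with backoff absorb transient errors, while circuit breakers stop callers from overwhelming a service that is already failing.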
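A service mesh applies retry and circuit-breaking policies in its proxies, but the underlying logic is simple enough to show in application code. The Python sketch below implements two pieces:

- **Circuit breaker:** opens after a number of consecutive failures, rejects calls while open, and lets one trial call through after a cool-down.
- **Retry helper:** uses exponential back-off with jitter and does not retry against an open circuit.

All names and default values are illustrative assumptions, not any particular library's API.

```python
from __future__ import annotations

import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and the call is rejected without being attempted."""


class CircuitBreaker:
    """Opens after `threshold` consecutive failures; allows a trial call after `reset_after` s."""

    def __init__(self, threshold: int = 5, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: float | None = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open: failing fast")
            self.opened_at = None  # half-open: let one trial call through
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # (re)open the circuit
            raise
        self.failures = 0  # a success closes the circuit
        return result


def retry(fn: Callable[[], T], attempts: int = 3, base_delay: float = 0.1,
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `fn`, retrying failures with exponential back-off and jitter."""
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # the breaker is open: retrying now would only add load
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt * random.uniform(0.5, 1.0))
    raise ValueError("attempts must be at least 1")


breaker = CircuitBreaker(threshold=2, reset_after=30)


def flaky_upstream() -> str:
    raise ConnectionError("upstream unavailable")


for _ in range(3):
    try:
        retry(lambda: breaker.call(flaky_upstream), attempts=1)
    except Exception as exc:
        print(type(exc).__name__)  # ConnectionError, ConnectionError, then CircuitOpenError
```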

Monitoring and Observability

Effective monitoring is crucial for identifying and responding to failures:

- Use tools like Prometheus and Grafana for metrics

- Implement centralized logging (e.g., ELK stack)

- Set up alerts for anomalies and failures

Observability helps teams detect issues early and respond proactively.
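Good alerts avoid paging on every short spike. Prometheus alerting rules handle this with a `for` duration: an alert is pending while its condition holds and starts firing only once the condition has held for that long.

The Python sketch below is a simplified model of those states over evenly spaced rule evaluations. The function name, threshold, and sample values are hypothetical.

```python
def alert_states(samples: list, threshold: float, for_seconds: int, interval_seconds: int) -> list:
    """Simplified Prometheus-style alert state after each rule evaluation."""
    states, streak = [], 0
    for value in samples:
        streak = streak + 1 if value > threshold else 0  # consecutive evaluations above threshold
        if streak == 0:
            states.append("inactive")
        elif (streak - 1) * interval_seconds >= for_seconds:
            states.append("firing")   # condition has held for at least `for_seconds`
        else:
            states.append("pending")
    return states


# Error ratio sampled every 30 s, alert when > 5% for 60 s.
print(alert_states([0.01, 0.08, 0.09, 0.07, 0.02], threshold=0.05,
                   for_seconds=60, interval_seconds=30))
# ['inactive', 'pending', 'pending', 'firing', 'inactive']
```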

Best Practices for Resilience

- Design for Failure: Assume components will fail and plan accordingly

- Use Multi-Zone Deployments: Distribute workloads across availability zones (and across regions for stronger isolation)

- Implement Chaos Engineering: Test failure scenarios intentionally

- Secure Configurations: Avoid misconfigurations through validation tools

- Automate Recovery Processes: Reduce manual intervention

These practices strengthen your Kubernetes environment against unexpected disruptions.
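A chaos experiment follows four steps:

1. State a steady-state hypothesis, for example "at least two replicas stay ready".
2. Check that the hypothesis holds.
3. Inject a failure.
4. Check the hypothesis again.

The Python sketch below runs those steps as a seeded, in-memory simulation; `chaos_experiment` and the pod names are hypothetical. Against a real cluster you would delete a pod through the Kubernetes API or use a chaos tool such as Chaos Mesh or LitmusChaos, and read real readiness status.

```python
from __future__ import annotations

import random


def chaos_experiment(pods: dict[str, bool], min_ready: int, rng: random.Random) -> tuple[str, bool]:
    """Kill one random pod (simulated) and report whether the steady state still holds."""
    if sum(pods.values()) < min_ready:
        raise ValueError("steady state does not hold before the experiment; aborting")
    victim = rng.choice(sorted(pods))
    ready_after = sum(ready for name, ready in pods.items() if name != victim)
    return victim, ready_after >= min_ready


rng = random.Random(42)  # seeded so the experiment is reproducible
replicas = {"web-1": True, "web-2": True, "web-3": True}
victim, ok = chaos_experiment(replicas, min_ready=2, rng=rng)
print(f"killed {victim}; steady state held: {ok}")
```

If the hypothesis fails, the experiment has found a weakness (here, too few spare replicas) before a real outage does.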

Real-World Example

Consider an e-commerce platform running on Kubernetes. During peak traffic, a node fails under the load. Without proper strategies, this could take the entire storefront offline. With replicas spread across nodes, readiness probes, and load balancing in place, the Service keeps routing traffic to the surviving pods. Meanwhile, the Deployment recreates the lost pods on healthy nodes and the cluster autoscaler adds capacity if needed, so customers see little or no interruption.

Future Trends in Kubernetes Resilience

As Kubernetes evolves, new tools and techniques are emerging:

- AI-driven anomaly detection

- Advanced autoscaling strategies

- Serverless Kubernetes platforms

- Improved multi-cluster management

These innovations aim to simplify failure handling and improve system reliability.

Conclusion

Kubernetes failure scenarios are inevitable, but downtime is not. By understanding potential issues and implementing robust recovery strategies, organizations can build resilient, high-performing systems.

From self-healing mechanisms to proactive monitoring, Kubernetes provides powerful tools to handle failures effectively. The key lies in combining these features with best practices to ensure seamless and reliable application delivery in today’s cloud-native world.