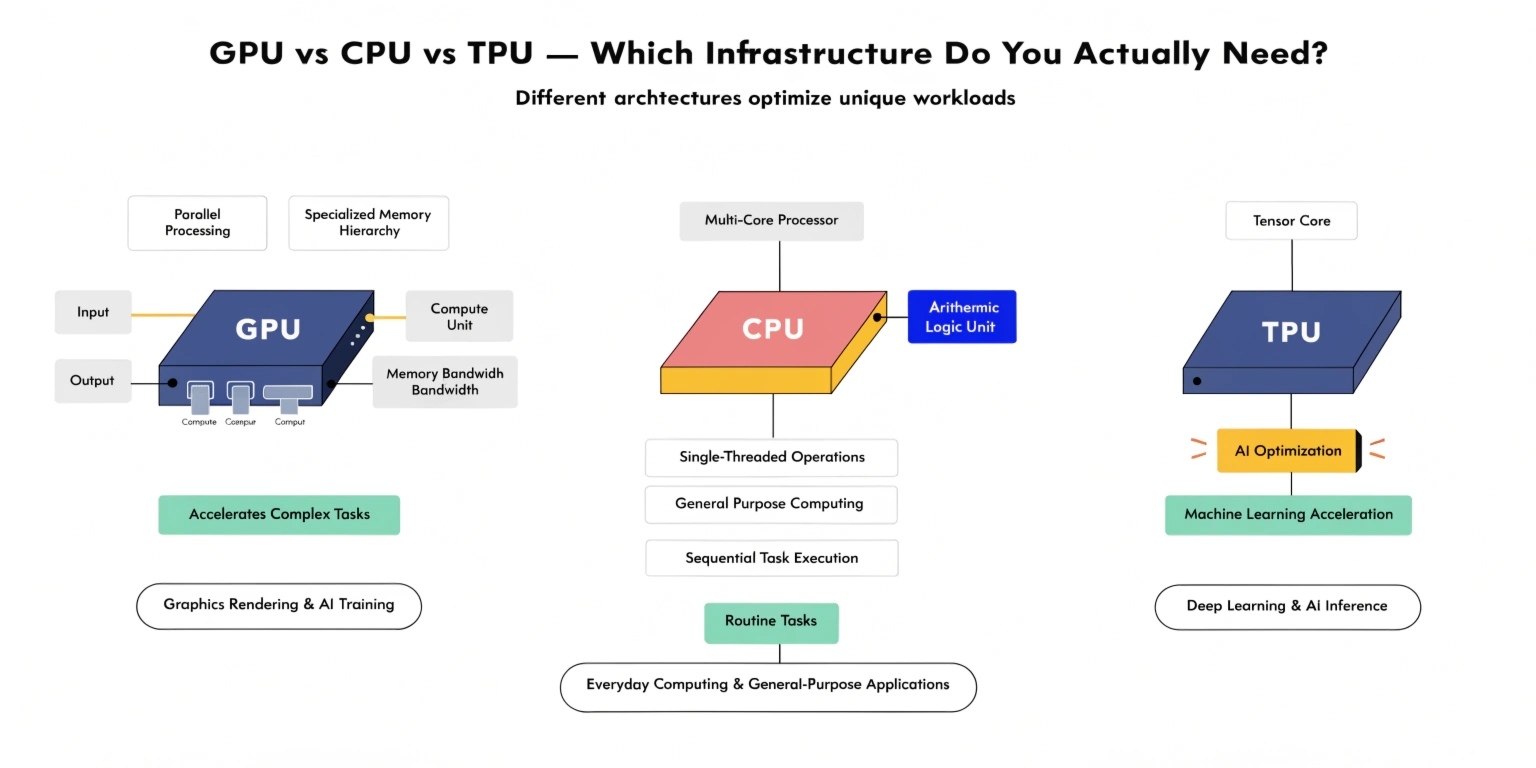

Modern computing workloads—especially in artificial intelligence and large-scale data processing—require powerful hardware infrastructure. Developers and organizations often face a critical decision: should they rely on CPUs, GPUs, or TPUs?

Each processor type is designed for different workloads and performance goals. Choosing the right infrastructure can significantly affect training speed, operational cost, and system scalability. Understanding how these processors work helps businesses and engineers make smarter infrastructure decisions.

Understanding the CPU

The Central Processing Unit (CPU) is the traditional brain of a computer. CPUs are designed for general-purpose computing and can efficiently handle a wide variety of tasks.

A typical CPU has a relatively small number of powerful cores, from a handful in laptops to dozens in server chips, each optimized for fast sequential execution. This makes it ideal for tasks that require strong single-thread performance or complex, branch-heavy logic.
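To make the sequential case concrete, here is a minimal Python sketch. The function name `collatz_steps` and the choice of the Collatz iteration are illustrative assumptions, not taken from any particular workload. Each loop iteration branches on the current value and needs the result of the previous one, which is the kind of dependent, logic-heavy work where one fast core tends to matter more than many slower ones.

```python
def collatz_steps(n):
    """Count Collatz iterations needed to reach 1 from a positive integer n."""
    steps = 0
    while n != 1:
        # Data-dependent branch: which path runs depends on the current value.
        if n % 2 == 0:
            n = n // 2
        else:
            n = 3 * n + 1
        # As written, the next iteration cannot start until this one has
        # produced the new n, so the loop is one long dependency chain.
        steps += 1
    return steps


print(collatz_steps(6))  # 8
```

A single chain like this gains little from extra cores; running many independent chains at once is a different, parallel-friendly problem.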

Common use cases for CPUs include:

- Web servers and backend APIs

- Operating systems and application logic

- Database processing

- Business software and enterprise applications

CPUs are extremely versatile, which is why they power most computers and cloud servers. However, when tasks require heavy parallel computation—such as training deep learning models—CPUs often struggle to match the performance of specialized processors.

Understanding the GPU

Graphics Processing Units (GPUs) were originally developed to render images and graphics for gaming and visual applications. Because rendering applies the same calculation to huge numbers of pixels at once, GPUs excel at parallel computation in general, which makes them well suited to machine learning and scientific workloads.

Unlike CPUs, GPUs contain thousands of smaller cores designed to perform the same operation simultaneously across large datasets.
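The "same operation across many elements" pattern can be sketched in plain Python. This is only a model of the idea, not GPU code: the names `scale_and_shift` and `apply_kernel` are hypothetical, and the loop runs on one CPU core. The point it illustrates is that no element depends on any other, so the work could be handed to thousands of cores at once.

```python
def scale_and_shift(x):
    """The 'kernel': one small operation applied to a single element."""
    return 2.0 * x + 1.0


def apply_kernel(data, order=None):
    """Apply the kernel to every element, visiting indices in any order."""
    indices = list(order) if order is not None else range(len(data))
    out = [0.0] * len(data)
    for i in indices:
        # Each iteration reads only data[i] and writes only out[i].
        # On a GPU, each index would typically be handled by its own thread.
        out[i] = scale_and_shift(data[i])
    return out


data = [0.0, 1.0, 2.0]
print(apply_kernel(data))                   # [1.0, 3.0, 5.0]
print(apply_kernel(data, order=[2, 0, 1]))  # same result, different visit order
```

Because the visit order does not change the result, the iterations are safe to run simultaneously, which is the property GPU hardware exploits.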

This architecture makes GPUs particularly powerful for:

- Deep learning model training

- Neural network computations

- Computer vision tasks

- Scientific simulations

- Cryptocurrency mining

- Video processing and rendering

For example, training large neural networks involves millions or billions of multiply-add operations, most of them inside matrix multiplications. GPUs typically handle these operations much faster than CPUs because they process many of the calculations in parallel.
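A quick back-of-the-envelope calculation shows where those large operation counts come from. The sketch below counts the multiply-adds in a single matrix product; the layer shape (a batch of 64 inputs, 1024 features in, 1024 features out) is a made-up example, not a measurement of any real model.

```python
def matmul_multiply_adds(m, k, n):
    """Multiply-add count for an (m x k) by (k x n) matrix product."""
    # The result has m * n entries, and each entry is a dot product of length k.
    return m * k * n


# Hypothetical dense layer: 64 inputs per batch, 1024 features in, 1024 out.
ops = matmul_multiply_adds(64, 1024, 1024)
print(ops)  # 67108864
```

One forward pass through one such layer already needs about 67 million multiply-adds, and training repeats this across many layers and many batches.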

Today, GPUs are widely used in AI research, data science, and high-performance computing.

Understanding the TPU

Tensor Processing Units (TPUs) are specialized processors designed specifically for machine learning workloads. Google developed them to accelerate the tensor-based operations used in neural networks.

TPUs are highly optimized for matrix multiplication and deep learning operations, which makes them extremely efficient for large-scale AI model training.
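One way to picture hardware built around matrix multiplication is blocked (tiled) multiplication, where a large product is broken into small fixed-size block products that a dedicated matrix unit could process one after another. The Python sketch below illustrates only that general pattern. It does not describe how any specific TPU is implemented, and the function name `matmul_tiled` and the tile size of 2 are assumptions chosen for readability.

```python
def matmul_tiled(a, b, tile=2):
    """Multiply matrices a (m x k) and b (k x n) one tile-sized block at a time."""
    m, k, n = len(a), len(b), len(b[0])
    c = [[0.0] * n for _ in range(m)]
    for i0 in range(0, m, tile):          # block row of the output
        for j0 in range(0, n, tile):      # block column of the output
            for p0 in range(0, k, tile):  # block along the shared dimension
                # One small block product, accumulated into the output block.
                for i in range(i0, min(i0 + tile, m)):
                    for j in range(j0, min(j0 + tile, n)):
                        acc = c[i][j]
                        for p in range(p0, min(p0 + tile, k)):
                            acc += a[i][p] * b[p][j]
                        c[i][j] = acc
    return c


a = [[1, 2, 3], [4, 5, 6]]
b = [[7, 8], [9, 10], [11, 12]]
print(matmul_tiled(a, b))  # [[58.0, 64.0], [139.0, 154.0]]
```

The result matches an ordinary matrix product; only the order of the work changes, so that each step operates on a small block of a fixed maximum size.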

Key advantages of TPUs include:

- Exceptional performance for neural network training

- High energy efficiency

- Optimized architecture for tensor operations

- Tight integration with machine learning frameworks such as TensorFlow, JAX, and PyTorch (through PyTorch/XLA)

TPUs are commonly used in cloud-based machine learning platforms and are especially effective when training very large models.

However, TPUs are less flexible than CPUs or GPUs because they are designed primarily for machine learning tasks.

Performance Comparison

Each processor type offers different strengths depending on the workload.

CPU Strengths

- Excellent for general-purpose tasks

- Strong single-thread performance

- Flexible and widely supported

- Ideal for backend services and application logic

GPU Strengths

- Massive parallel processing power

- Faster training for deep learning models

- Suitable for graphics and compute-heavy workloads

- Widely used in AI development

TPU Strengths

- Highly optimized for tensor operations

- High throughput for large AI models

- High efficiency for deep learning training

- Ideal for large-scale machine learning pipelines

Cost Considerations

Infrastructure cost plays a major role when selecting computing hardware.

CPUs are the most affordable and widely available option. Every cloud computing instance includes one, including the hosts that GPUs and TPUs attach to.

GPUs are more expensive due to their specialized hardware capabilities. However, the speed improvements they provide for machine learning workloads often justify the cost.

TPUs can be the most cost-effective option for large-scale AI training tasks, but they are typically available only as a cloud service, primarily through Google Cloud.

Organizations must balance performance needs with budget constraints when choosing infrastructure.
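The trade-off between hourly price and speed comes down to simple arithmetic, sketched below. All prices, durations, and the 20x speedup are invented for illustration and are not real cloud rates or benchmarks. The function names `training_cost` and `break_even_speedup` are hypothetical as well.

```python
def training_cost(hourly_price, hours):
    """Total cost of a job billed by the hour."""
    return hourly_price * hours


def break_even_speedup(cpu_hourly_price, accelerator_hourly_price):
    """Speedup at which the accelerator costs the same as the CPU for one job."""
    return accelerator_hourly_price / cpu_hourly_price


# Hypothetical numbers, not real cloud prices.
cpu_cost = training_cost(hourly_price=1.0, hours=100.0)
gpu_cost = training_cost(hourly_price=8.0, hours=5.0)  # assumes a 20x speedup

print(cpu_cost, gpu_cost)            # 100.0 40.0
print(break_even_speedup(1.0, 8.0))  # 8.0
```

In this made-up scenario the accelerator costs eight times more per hour yet finishes the job for less, because its speedup (20x) exceeds the break-even point (8x). If the speedup were below 8x, the CPU would be the cheaper choice.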

When Should You Use Each?

Choosing the right processor depends on the type of workload you plan to run.

Use CPUs when:

- Running web applications or APIs

- Handling databases and backend logic

- Performing general computing tasks

Use GPUs when:

- Training machine learning models

- Running deep learning experiments

- Processing large datasets or images

Use TPUs when:

- Training very large neural networks

- Running large-scale AI pipelines

- Optimizing performance for tensor operations
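The guidelines above can be written down as a small rule-based helper. This is a rough sketch of the article's rules of thumb, not a sizing tool: the function name `suggest_processor`, the workload labels, and the `model_is_very_large` flag are all hypothetical.

```python
def suggest_processor(workload, model_is_very_large=False):
    """Map a coarse workload label to a processor type using the rules above."""
    general_workloads = {"web", "api", "database", "backend", "general"}
    ml_workloads = {"training", "deep_learning", "image_processing"}

    if workload in general_workloads:
        return "CPU"
    if workload in ml_workloads:
        # Very large models are where specialized tensor hardware pays off.
        return "TPU" if model_is_very_large else "GPU"
    raise ValueError(f"unknown workload label: {workload!r}")


print(suggest_processor("api"))                                 # CPU
print(suggest_processor("training"))                            # GPU
print(suggest_processor("training", model_is_very_large=True))  # TPU
```

A real decision would also weigh budget, framework support, and availability, which a lookup like this does not capture.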

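Many modern AI systems combine processor types to create efficient and scalable pipelines: CPUs handle data loading, preprocessing, and orchestration, while GPUs or TPUs run the heavy numerical work of training and inference.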

Conclusion

CPUs, GPUs, and TPUs each play a crucial role in modern computing infrastructure. CPUs provide versatility for general workloads, GPUs deliver powerful parallel processing for AI and graphics tasks, and TPUs offer specialized acceleration for deep learning.

Understanding the strengths and limitations of each processor type allows developers and organizations to build more efficient systems. The right infrastructure choice ultimately depends on workload requirements, scalability goals, and budget constraints.

As artificial intelligence continues to grow, selecting the correct computing architecture will become increasingly important for building fast, scalable, and cost-effective applications.