Modern CPUs are incredibly fast, but their performance heavily depends on how efficiently memory is accessed. In multithreaded systems, one subtle yet critical issue that can degrade performance is false sharing. Even when threads operate on separate variables, they can unintentionally interfere with each other due to how CPU caches work.

Understanding and optimizing cache line usage is essential for building high-performance concurrent systems.

What is a Cache Line?

A CPU cache does not operate at the level of individual variables. Instead, it works with fixed-size blocks of memory called cache lines, typically 64 bytes on modern x86-64 and most ARM processors (Apple's M-series chips use 128-byte lines).

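When a thread reads or writes a variable, the entire cache line containing that variable is loaded into the cache of the core running that thread. This design improves performance by exploiting spatial locality, since nearby data is likely to be needed soon, but it also means that unrelated variables stored in the same line always move together.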
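To make the idea concrete, here is a small C++ sketch that assumes 64-byte lines; kCacheLineSize, cache_line_index, and adjacent_values are illustrative names, not a standard API. Dividing an address by the line size gives the index of the line that holds it, so eight 8-byte values that start on a line boundary all map to the same line.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumption: 64-byte cache lines (typical on x86-64; check your target).
constexpr std::size_t kCacheLineSize = 64;

// Returns the index of the cache line containing address p.
// Two addresses with the same index live in the same line.
inline std::uintptr_t cache_line_index(const void* p) {
    return reinterpret_cast<std::uintptr_t>(p) / kCacheLineSize;
}

// Eight 8-byte values starting on a line boundary fill exactly one line.
alignas(kCacheLineSize) std::uint64_t adjacent_values[8];
```

Touching any one of adjacent_values[0] through adjacent_values[7] brings all eight into the core's cache.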

What is False Sharing?

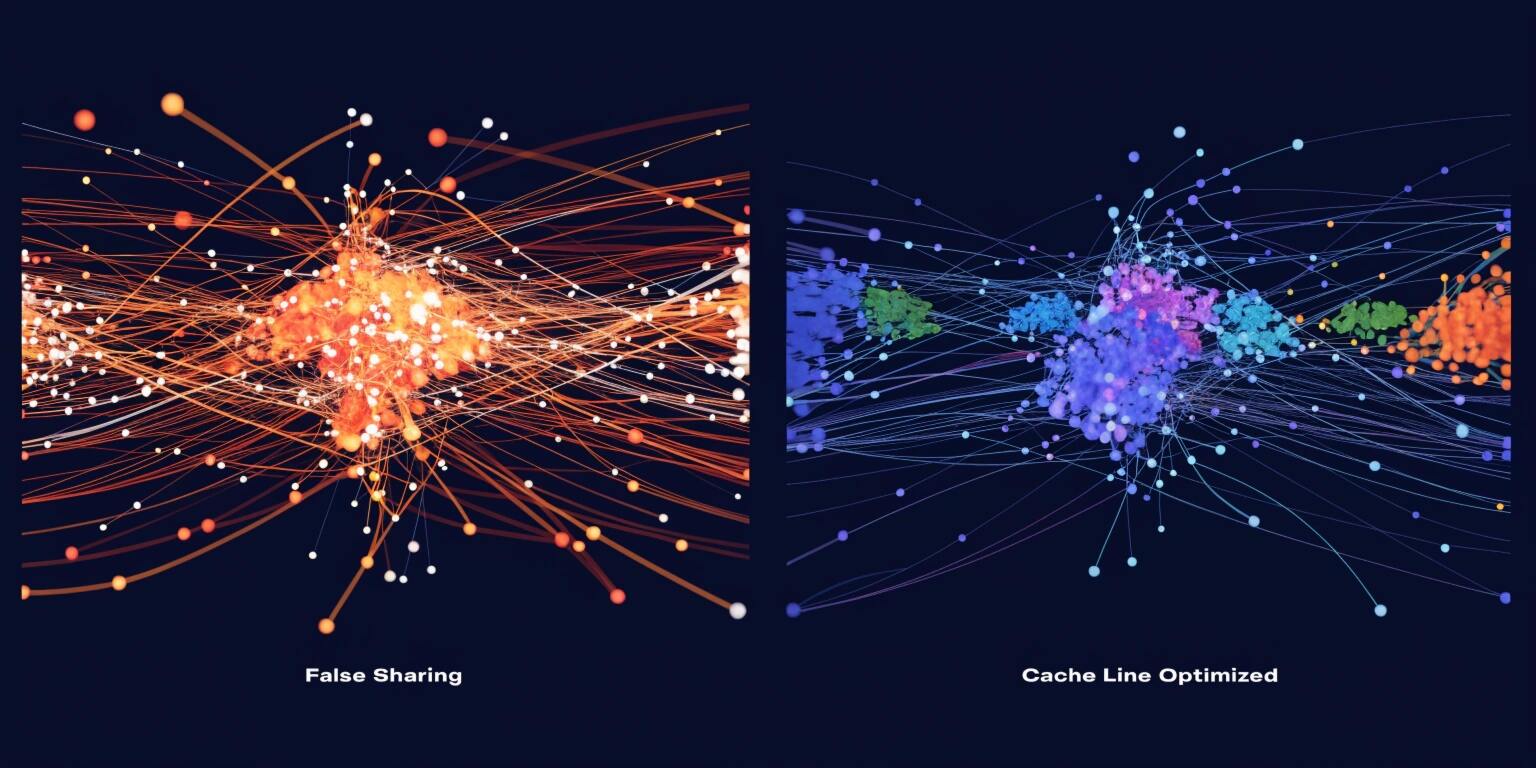

False sharing occurs when multiple threads modify different variables that reside on the same cache line. Even though the variables are logically independent, the hardware tracks ownership per cache line rather than per variable, so it treats them as shared data.

This leads to:

- Frequent cache invalidations

- Increased memory traffic

- Reduced parallel efficiency

The root cause lies in cache coherence protocols (such as MESI and its variants), which ensure that all CPU cores have a consistent view of memory. Before a core can write to a cache line, it must obtain exclusive ownership of it, which invalidates the copies held by other cores, even if those cores never use the modified variable.

Why False Sharing Hurts Performance

In a multithreaded environment, threads ideally operate independently. False sharing breaks this independence.

Imagine two threads updating two separate counters stored next to each other in memory. If both counters fall within the same cache line:

- Thread A updates counter A → cache line invalidated for Thread B

- Thread B updates counter B → cache line invalidated for Thread A

This constant invalidation forces the line to bounce back and forth between the two cores' caches, a bottleneck known as “cache line ping-pong.”

The result? Slower execution despite using multiple threads.
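The two-counter scenario can be sketched in C++ as follows. AdjacentCounters and run_two_writers are hypothetical names; each thread writes only its own counter, yet the two 8-byte atomics normally sit in the same 64-byte line, so every increment can pull the line away from the other core.

```cpp
#include <atomic>
#include <cassert>
#include <cstdint>
#include <thread>

// Two counters declared side by side. Each std::atomic<std::uint64_t> is
// 8 bytes, so on a CPU with 64-byte lines they normally share one line.
struct AdjacentCounters {
    std::atomic<std::uint64_t> a{0};  // written only by thread A
    std::atomic<std::uint64_t> b{0};  // written only by thread B
};

// Hypothetical helper reproducing the scenario: thread A touches only `a`,
// thread B touches only `b`. The totals are always correct; false sharing
// only affects how long the loops take.
void run_two_writers(AdjacentCounters& c, std::uint64_t iterations) {
    std::thread thread_a([&c, iterations] {
        for (std::uint64_t i = 0; i < iterations; ++i)
            c.a.fetch_add(1, std::memory_order_relaxed);  // can invalidate B's copy of the line
    });
    std::thread thread_b([&c, iterations] {
        for (std::uint64_t i = 0; i < iterations; ++i)
            c.b.fetch_add(1, std::memory_order_relaxed);  // can invalidate A's copy of the line
    });
    thread_a.join();
    thread_b.join();
}
```

The final counts are always correct, which is exactly why the problem is easy to miss: only the running time reveals it.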

Identifying False Sharing

False sharing is difficult to detect because it never causes errors or incorrect results; it only degrades performance.

Common indicators include:

- Unexpected slowdown in multithreaded code

- High CPU usage with low throughput

- Performance not scaling with additional threads

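Profiling tools such as Intel VTune and Linux perf can help identify memory contention. In particular, perf c2c reports cache lines accessed by multiple cores, including HITM events where one core hits a line that is modified in another core's cache. Flame graphs show where CPU time is spent, so they can point to a hot write site, but not to the sharing itself.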
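Before reaching for a profiler, a crude scaling test can confirm the last symptom in the list. The sketch below is a hypothetical micro-benchmark (time_adjacent_slots is an illustrative name), not a rigorous harness: each thread increments its own slot in a tightly packed array, and the function returns the elapsed seconds.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <cstdint>
#include <thread>
#include <vector>

// Hypothetical micro-benchmark: each thread increments only its own slot,
// but the slots are packed back to back (8 bytes each), so neighboring
// threads' slots share cache lines. Returns elapsed wall-clock seconds.
double time_adjacent_slots(unsigned num_threads, std::uint64_t iterations) {
    // Value-initialized to zero; deliberately NOT padded.
    std::vector<std::atomic<std::uint64_t>> slots(num_threads);
    std::vector<std::thread> workers;
    workers.reserve(num_threads);

    const auto start = std::chrono::steady_clock::now();
    for (unsigned t = 0; t < num_threads; ++t) {
        workers.emplace_back([&slots, t, iterations] {
            for (std::uint64_t i = 0; i < iterations; ++i)
                slots[t].fetch_add(1, std::memory_order_relaxed);
        });
    }
    for (auto& w : workers) w.join();
    const auto stop = std::chrono::steady_clock::now();

    // Sanity check: the result is correct no matter how slow it was.
    for (unsigned t = 0; t < num_threads; ++t)
        assert(slots[t].load() == iterations);

    return std::chrono::duration<double>(stop - start).count();
}
```

With the same per-thread iteration count, truly independent threads would finish in roughly constant time at 1, 2, 4, and 8 threads; times that grow with the thread count suggest, but do not prove, contention such as false sharing. Results depend heavily on the machine and on how the OS schedules the threads.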

Techniques to Avoid False Sharing

1. Padding Variables

Padding ensures that frequently updated variables occupy separate cache lines.

Example (conceptual):

Instead of placing variables adjacently, add unused space between them so they don’t share a cache line.

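Some languages provide padding utilities as well. Java's @Contended annotation, for example, asks the JVM to pad a field or class onto its own cache line, but outside the JDK it only takes effect when the JVM is started with -XX:-RestrictContended.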
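In C++, manual padding can be sketched as below, assuming a 64-byte line; PaddedCounter and counters are illustrative names. The padding array fills the rest of the line, so consecutive array elements are exactly 64 bytes apart.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumption: 64-byte cache lines.
constexpr std::size_t kCacheLineSize = 64;

// A counter followed by enough unused bytes to fill the rest of a line.
struct PaddedCounter {
    std::atomic<std::uint64_t> value{0};
    char padding[kCacheLineSize - sizeof(std::atomic<std::uint64_t>)];  // never touched
};

static_assert(sizeof(PaddedCounter) == kCacheLineSize,
              "PaddedCounter should occupy exactly one cache line");

// One counter per thread in a hypothetical 4-thread pool.
PaddedCounter counters[4];
```

Because each 8-byte counter is naturally aligned, it can never straddle a line boundary, so even if the array itself is not line-aligned, no two counters share a line. A counter's padding may share a line with the next counter, which is harmless because nothing writes to the padding.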

2. Cache Line Alignment

Align data structures to cache line boundaries.

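If every object used by a different thread starts on a line boundary and its size is a whole number of lines, those objects are placed in separate cache lines from the start.

Low-level languages like C/C++ allow explicit control using alignment specifiers such as alignas (C++11; _Alignas in C11). C++17 also provides std::hardware_destructive_interference_size in <new> as a compile-time estimate of the spacing needed to avoid false sharing.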
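A sketch of the same idea with alignas, again assuming 64-byte lines (AlignedCounter, per_thread, and in_different_lines are illustrative names). Aligning the type, rather than padding it by hand, also makes the compiler round its size up to a multiple of the alignment.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumption: 64-byte cache lines.
constexpr std::size_t kCacheLineSize = 64;

// Every instance starts on a line boundary, and sizeof is rounded up to
// a multiple of the alignment, so array neighbors land in different lines.
struct alignas(kCacheLineSize) AlignedCounter {
    std::atomic<std::uint64_t> value{0};
};

static_assert(alignof(AlignedCounter) == kCacheLineSize, "line-aligned");
static_assert(sizeof(AlignedCounter) == kCacheLineSize, "one line per counter");

// Hypothetical per-thread counters for a 4-thread pool.
AlignedCounter per_thread[4];

// True if the two addresses fall in different cache lines.
inline bool in_different_lines(const void* x, const void* y) {
    return reinterpret_cast<std::uintptr_t>(x) / kCacheLineSize !=
           reinterpret_cast<std::uintptr_t>(y) / kCacheLineSize;
}
```

One caveat: before C++17, operator new ignored alignments larger than alignof(std::max_align_t), so heap-allocated AlignedCounter objects were not guaranteed to be line-aligned. C++17's aligned new removes that pitfall.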

3. Use Thread-Local Storage

Thread-local variables eliminate sharing entirely.

Each thread works on its own copy of data, avoiding cache contention.

After computation, results can be combined (reduction phase).
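A minimal C++ sketch of this pattern computes a sum in parallel (parallel_sum is a hypothetical name). It uses an ordinary local variable, which lives in a register or on the thread's own stack, rather than the thread_local keyword; the effect on the hot loop is the same. Each worker writes to the shared partial vector exactly once, and the final std::accumulate call is the reduction phase.

```cpp
#include <cassert>
#include <cstdint>
#include <numeric>
#include <thread>
#include <vector>

// Sums 0..n-1 using num_threads workers, each striding over the range.
std::uint64_t parallel_sum(std::uint64_t n, unsigned num_threads) {
    std::vector<std::uint64_t> partial(num_threads, 0);
    std::vector<std::thread> workers;
    workers.reserve(num_threads);

    for (unsigned t = 0; t < num_threads; ++t) {
        workers.emplace_back([&partial, t, n, num_threads] {
            std::uint64_t local = 0;  // private to this thread: no sharing
            for (std::uint64_t i = t; i < n; i += num_threads)
                local += i;
            partial[t] = local;  // single write to shared memory
        });
    }
    for (auto& w : workers) w.join();

    // Reduction phase: combine the per-thread results.
    return std::accumulate(partial.begin(), partial.end(), std::uint64_t{0});
}
```

Adjacent entries of partial do share cache lines, but with only one write per thread that cost is negligible.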

4. Optimize Data Structures

Structure your data based on access patterns.

- Group read-heavy data together

- Separate write-heavy variables

- Avoid interleaving frequently modified fields

Designing memory layout intentionally can significantly reduce contention.
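The sketch below applies these rules to a hypothetical per-connection record (ConnectionStats and its fields are invented for illustration, assuming 64-byte lines): read-mostly fields are packed together, and each write-heavy field starts its own line via alignas.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>

// Assumption: 64-byte cache lines.
constexpr std::size_t kCacheLineSize = 64;

struct ConnectionStats {
    // Read-mostly fields: set once, then only read by many threads.
    // Packing them together lets every core keep a read-only copy of their line.
    std::uint32_t id = 0;
    std::uint32_t max_inflight = 0;
    const char* name = nullptr;

    // Write-heavy fields: each starts its own line, so updates invalidate
    // neither the read-mostly line above nor each other.
    alignas(kCacheLineSize) std::atomic<std::uint64_t> bytes_sent{0};      // sender thread
    alignas(kCacheLineSize) std::atomic<std::uint64_t> bytes_received{0};  // receiver thread
};
```

The cost is size: on a typical 64-bit platform the struct grows from 32 bytes to 192, so this layout pays off only for objects that are genuinely contended.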

5. Minimize Shared Writes

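Reads are far less problematic than writes: any number of cores can keep a copy of the same line as long as nobody modifies it, and only a write forces the other copies to be invalidated.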

Reducing the number of write operations to shared memory helps minimize cache invalidations.

Techniques include:

- Using immutable data

- Applying batching strategies

- Leveraging lock-free algorithms
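Batching can be sketched as follows (count_events and run_batched are hypothetical names). Each thread counts in a private variable and publishes to the shared atomic only once per batch_size events, so the number of shared writes drops by roughly that factor.

```cpp
#include <atomic>
#include <cassert>
#include <cstdint>
#include <thread>
#include <vector>

// Count in a private variable and publish to the shared atomic once per
// `batch_size` events, plus a final flush of the remainder.
void count_events(std::atomic<std::uint64_t>& shared_total,
                  std::uint64_t events, std::uint64_t batch_size) {
    std::uint64_t pending = 0;  // thread-private: updating it causes no coherence traffic
    for (std::uint64_t i = 0; i < events; ++i) {
        ++pending;
        if (pending == batch_size) {
            shared_total.fetch_add(pending, std::memory_order_relaxed);
            pending = 0;
        }
    }
    if (pending != 0)  // flush whatever is left over
        shared_total.fetch_add(pending, std::memory_order_relaxed);
}

// Runs num_threads workers against one shared total and returns it.
std::uint64_t run_batched(unsigned num_threads, std::uint64_t events_per_thread,
                          std::uint64_t batch_size) {
    std::atomic<std::uint64_t> total{0};
    std::vector<std::thread> workers;
    for (unsigned t = 0; t < num_threads; ++t)
        workers.emplace_back([&total, events_per_thread, batch_size] {
            count_events(total, events_per_thread, batch_size);
        });
    for (auto& w : workers) w.join();
    return total.load();
}
```

The trade-off is freshness: until a thread flushes, readers of the shared total can miss up to batch_size - 1 of its events.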

Real-World Example

Consider a metrics system where multiple threads update counters.

A naive implementation stores all counters in a single array. This can lead to false sharing if adjacent counters are updated by different threads.

An optimized version assigns each thread its own padded counter, ensuring no two threads write to the same cache line. Under heavy update load, this can dramatically improve throughput.
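The optimized version can be sketched in C++ like this, assuming 64-byte lines and a maximum thread count known at compile time; PerThreadCounter, increment, total, and simulate_requests are illustrative names, not part of any metrics library. Each thread writes only its own line-aligned slot, and readers sum the slots on demand.

```cpp
#include <array>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <thread>
#include <vector>

// Assumption: 64-byte cache lines.
constexpr std::size_t kCacheLineSize = 64;

// One line-aligned slot per thread, so writers never share a cache line.
template <std::size_t MaxThreads>
class PerThreadCounter {
public:
    // Must be called only by the thread that owns `thread_index`.
    void increment(std::size_t thread_index) {
        slots_[thread_index].value.fetch_add(1, std::memory_order_relaxed);
    }

    // Sums all slots. Writers may still be running, so this is a snapshot.
    std::uint64_t total() const {
        std::uint64_t sum = 0;
        for (const Slot& s : slots_)
            sum += s.value.load(std::memory_order_relaxed);
        return sum;
    }

private:
    struct alignas(kCacheLineSize) Slot {
        std::atomic<std::uint64_t> value{0};
    };
    std::array<Slot, MaxThreads> slots_;
};

// Usage sketch: four worker threads each record 1000 requests.
std::uint64_t simulate_requests() {
    PerThreadCounter<4> requests;
    std::vector<std::thread> workers;
    for (std::size_t t = 0; t < 4; ++t)
        workers.emplace_back([&requests, t] {
            for (int i = 0; i < 1000; ++i)
                requests.increment(t);
        });
    for (auto& w : workers) w.join();
    return requests.total();
}
```

Because total() reads the slots one at a time while writers may keep running, it returns an approximate snapshot rather than an exact instantaneous value, which is usually acceptable for metrics.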

Impact on High-Performance Systems

False sharing is especially critical in:

- Game engines

- Financial trading systems

- Real-time analytics platforms

- High-frequency data processing pipelines

In such systems, even small inefficiencies can lead to significant performance loss.

Best Practices for Cache Optimization

- Understand your hardware (cache size, line size)

- Design memory layout deliberately

- Profile regularly

- Avoid premature optimization, but fix bottlenecks once profiling identifies them
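For the first practice, the line size can be checked at runtime. This sketch assumes Linux with glibc, where sysconf accepts the _SC_LEVEL1_DCACHE_LINESIZE extension, and falls back to an assumed 64 bytes elsewhere or when the value is unavailable; cache_line_size is an illustrative name.

```cpp
#include <cassert>
#include <cstddef>
#if defined(__linux__)
#include <unistd.h>
#endif

// Assumed fallback when the OS does not report a value.
constexpr std::size_t kAssumedCacheLineSize = 64;

// Returns the L1 data cache line size reported by glibc's sysconf
// extension, or the assumed fallback if it is unavailable (0 or -1).
std::size_t cache_line_size() {
#if defined(__linux__) && defined(_SC_LEVEL1_DCACHE_LINESIZE)
    const long reported = sysconf(_SC_LEVEL1_DCACHE_LINESIZE);
    if (reported > 0)
        return static_cast<std::size_t>(reported);
#endif
    return kAssumedCacheLineSize;
}
```

Since alignas needs a compile-time constant, a program might compare this runtime value with the constant it was built with and log a warning on mismatch.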

Optimization at the cache level requires a balance between performance and code maintainability.

Conclusion

False sharing is a hidden performance killer in multithreaded systems. While it doesn’t affect correctness, it can severely limit scalability and efficiency.

By understanding how cache lines work and applying techniques like padding, alignment, and thread-local storage, developers can eliminate unnecessary contention and unlock the full potential of parallel processing.

In high-performance applications, these low-level optimizations can make the difference between average and exceptional performance.