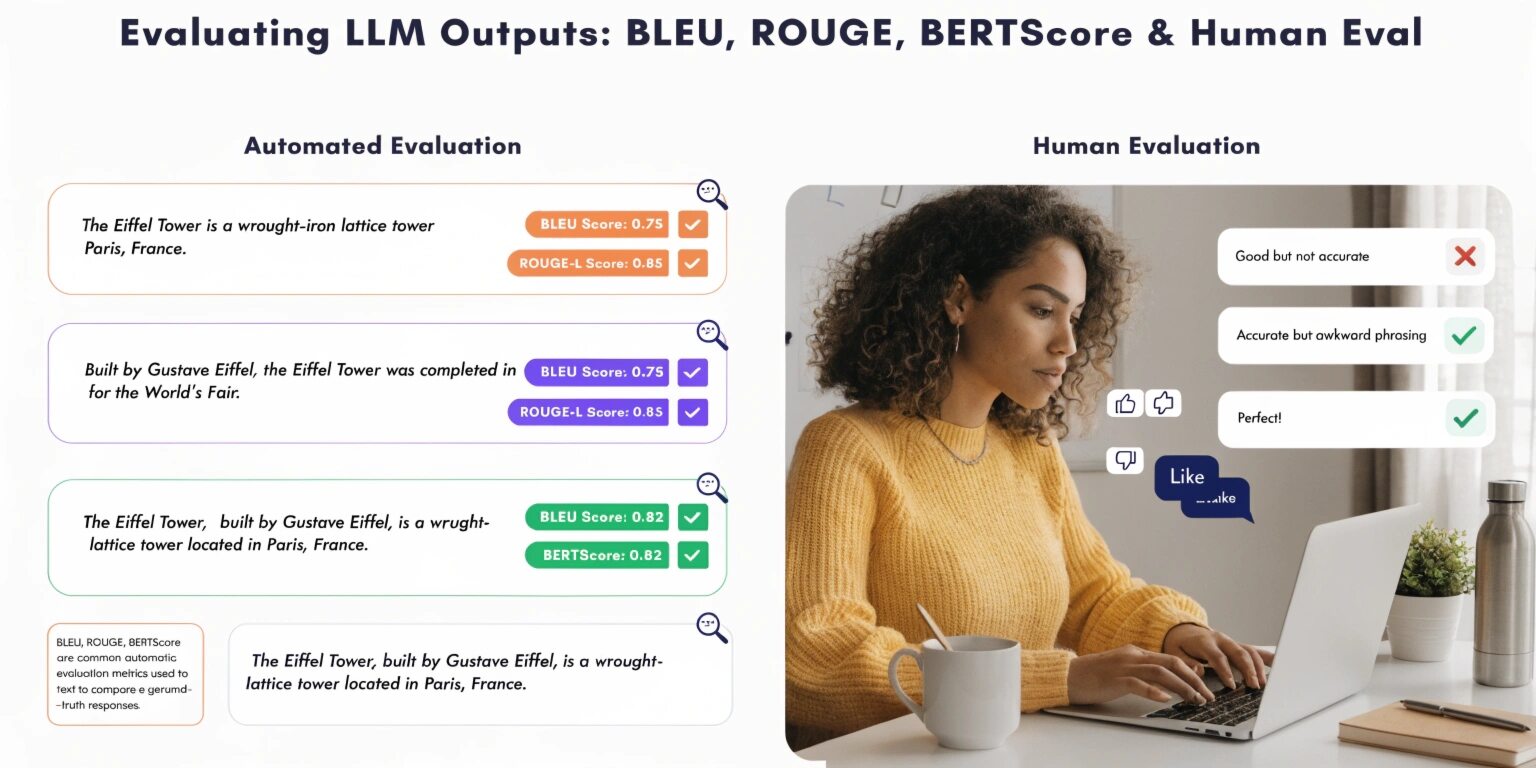

Evaluating the outputs of Large Language Models (LLMs) is a critical yet complex task in modern AI systems. Unlike traditional software, where correctness is binary, LLM outputs are often subjective, context-dependent, and open-ended. To measure their effectiveness, developers and researchers rely on a combination of automated metrics and human evaluation.

In this blog, we explore four widely used evaluation approaches: BLEU, ROUGE, BERTScore, and human evaluation, along with their strengths and limitations.

1. BLEU (Bilingual Evaluation Understudy)

BLEU is one of the earliest and most widely used metrics for evaluating text generation, especially in machine translation. It measures the overlap between the generated text and a reference text using n-gram precision.

How it works:

BLEU calculates how many words or phrases (n-grams) in the generated output match those in the reference output. It also applies a brevity penalty to discourage overly short responses.

Advantages:

- Simple and fast to compute

- Standardized metric for benchmarking

- Useful for translation tasks

Limitations:

- Focuses only on exact word matches

- Ignores semantic meaning

- Penalizes valid paraphrases

For example, “The cat is on the mat” and “A cat sits on the mat” may convey the same meaning but receive a low BLEU score due to different wording.

2. ROUGE (Recall-Oriented Understudy for Gisting Evaluation)

ROUGE is commonly used for text summarization tasks. Unlike BLEU, which focuses on precision, ROUGE emphasizes recall—how much of the reference text is captured in the generated output.

Key variants:

- ROUGE-N: Measures n-gram overlap

- ROUGE-L: Uses longest common subsequence

- ROUGE-S: Considers skip-grams

Advantages:

- Effective for summarization evaluation

- Captures coverage of important content

- Easy to interpret

Limitations:

- Still relies on lexical overlap

- Does not fully capture meaning

- Can reward longer outputs unnecessarily

ROUGE works best when there is a clear reference summary, but struggles with creative or highly variable outputs.

3. BERTScore

BERTScore represents a major advancement in evaluation metrics by leveraging contextual embeddings from transformer models. Instead of exact word matching, it compares semantic similarity between generated and reference texts.

How it works:

Each word in the generated text is matched with the most similar word in the reference text using vector embeddings. The similarity scores are then aggregated.

Advantages:

- Captures semantic meaning

- Handles paraphrasing effectively

- More aligned with human judgment

Limitations:

- Computationally expensive

- Dependent on pre-trained models

- Can be sensitive to model biases

For modern LLM applications, BERTScore provides a more nuanced and meaningful evaluation compared to BLEU and ROUGE.

4. Human Evaluation

Despite advances in automated metrics, human evaluation remains the gold standard for assessing LLM outputs.

Key criteria used by humans:

- Fluency: Is the text grammatically correct?

- Coherence: Does it make logical sense?

- Relevance: Does it answer the question?

- Factual accuracy: Is the information correct?

Advantages:

- Captures real-world quality

- Evaluates context and nuance

- Flexible across use cases

Limitations:

- Time-consuming and expensive

- Subjective and inconsistent

- Hard to scale

Human evaluation is especially important for applications like chatbots, content generation, and customer support, where user experience matters more than strict textual similarity.

Choosing the Right Evaluation Method

No single metric is perfect. The choice depends on your use case:

- Machine Translation: BLEU + BERTScore

- Summarization: ROUGE + Human Evaluation

- Conversational AI: BERTScore + Human Evaluation

- Creative Writing: Primarily Human Evaluation

A hybrid approach often yields the best results. Automated metrics provide scalability, while human evaluation ensures quality and relevance.

Best Practices for LLM Evaluation

- Use multiple metrics: Avoid relying on a single score

- Align metrics with goals: Choose metrics based on your application

- Include human feedback: Especially for user-facing systems

- Continuously evaluate: Monitor performance over time

- Test diverse inputs: Ensure robustness across scenarios

Final Thoughts

Evaluating LLM outputs is not just about numbers—it’s about understanding how well the model meets user expectations. While BLEU and ROUGE offer quick insights, and BERTScore adds semantic depth, human evaluation remains essential for capturing real-world performance.

As LLMs continue to evolve, evaluation techniques must also advance, combining automation with human judgment to ensure reliable and meaningful results.